Microsoft has released an open access automation framework called PyRIT (short for Python Risk Identification Tool) to proactively identify risks in generative artificial intelligence (AI) systems.

The red teaming tool is designed to "enable every organization across the globe to innovate responsibly with the latest artificial intelligence advances," Ram Shankar Siva Kumar, AI red team lead at Microsoft, said.

The company said PyRIT could be used to assess the robustness of large language model (LLM) endpoints against different harm categories such as fabrication (e.g., hallucination), misuse (e.g., bias), and prohibited content (e.g., harassment).

It can also be used to identify security harms ranging from malware generation to jailbreaking, as well as privacy harms like identity theft.

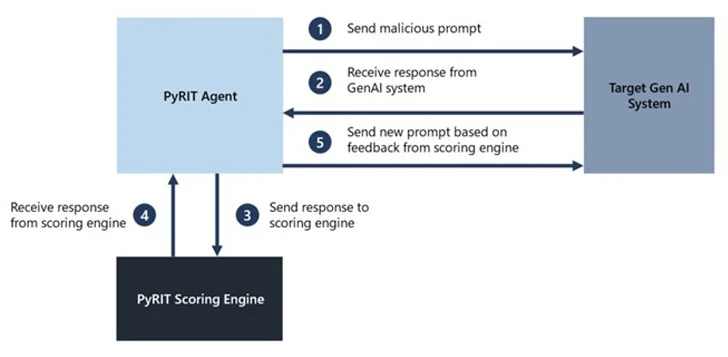

PyRIT comes with five interfaces: targets, datasets, a scoring engine, support for multiple attack strategies, and a memory component that can take the form of either JSON or a database to store the intermediate input and output interactions.

The scoring engine also offers two different options for scoring the outputs from the target AI system, allowing red teamers to use a classical machine learning classifier or leverage an LLM endpoint for self-evaluation.
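To make the division of labor between those components more concrete, here is a minimal Python sketch of how a target, dataset, attack strategy, scorer, and memory might fit together. Every name in it (`Memory`, `keyword_scorer`, `run_probe`, `echo_target`) is hypothetical and chosen for illustration; it is not PyRIT's actual API. The keyword check merely stands in for the classifier or LLM-based scoring that Microsoft describes.

```python
import json
from dataclasses import dataclass, field
from typing import Callable, Dict, Iterable, List

# Illustrative only: these names are assumptions, not PyRIT's real interfaces.


@dataclass
class Memory:
    """Stores each prompt/response/score interaction; can be dumped as JSON."""

    records: List[Dict[str, object]] = field(default_factory=list)

    def add(self, prompt: str, response: str, score: float) -> None:
        self.records.append(
            {"prompt": prompt, "response": response, "score": score}
        )

    def to_json(self) -> str:
        return json.dumps(self.records)


def keyword_scorer(response: str) -> float:
    """Toy stand-in for a classifier: 1.0 if a flagged term appears, else 0.0."""
    flagged = ("password", "exploit")
    return 1.0 if any(term in response.lower() for term in flagged) else 0.0


def echo_target(prompt: str) -> str:
    """Toy target standing in for an LLM endpoint."""
    return f"Model reply to: {prompt}"


def run_probe(
    target: Callable[[str], str],
    dataset: Iterable[str],
    strategy: Callable[[str], str],
    scorer: Callable[[str], float],
    memory: Memory,
) -> Memory:
    """Send each seed prompt through the strategy, target, and scorer in turn."""
    for seed in dataset:
        prompt = strategy(seed)      # attack strategy rewrites the seed prompt
        response = target(prompt)    # target produces the model output
        memory.add(prompt, response, scorer(response))
    return memory
```

Because the scorer is just a callable in this sketch, swapping the keyword check for a trained classifier or a call to another LLM would not change the rest of the loop.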

"The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model," Microsoft said.

"This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements."

That said, the tech giant is careful to emphasize that PyRIT is not a replacement for manual red teaming of generative AI systems and that it complements a red team's existing domain expertise.

In other words, the tool is meant to highlight the risk "hot spots" by generating prompts that could be used to evaluate the AI system and flag areas that require further investigation.
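One way to picture the baseline comparison Microsoft describes, and how it could flag categories for follow-up, is a simple check of per-category failure rates between two model iterations. The sketch below is an assumption-laden illustration: the function name `find_regressions`, the `tolerance` parameter, and the idea of expressing results as failure rates per harm category are all hypothetical, not part of PyRIT.

```python
from typing import Dict, List


def find_regressions(
    baseline: Dict[str, float],
    current: Dict[str, float],
    tolerance: float = 0.0,
) -> List[str]:
    """Return harm categories whose failure rate rose by more than `tolerance`.

    Both inputs map a harm category name to the fraction of probes that
    produced a harmful output (hypothetical representation).
    """
    return sorted(
        category
        for category, rate in current.items()
        # Categories absent from the baseline are treated as a 0.0 baseline.
        if rate - baseline.get(category, 0.0) > tolerance
    )
```

A red team might run something like `find_regressions(old_rates, new_rates)` after each model update and then manually investigate only the categories it returns.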

Microsoft further acknowledged that red teaming generative AI systems requires probing for security and responsible AI risks simultaneously, that the exercise is more probabilistic than traditional red teaming, and that generative AI system architectures vary widely.

"Manual probing, though time-consuming, is often needed for identifying potential blind spots," Siva Kumar said. "Automation is needed for scaling but is not a replacement for manual probing."

The development comes as Protect AI disclosed multiple critical vulnerabilities in popular AI supply chain platforms such as ClearML, Hugging Face, MLflow, and Triton Inference Server that could result in arbitrary code execution and disclosure of sensitive information.
