2024-06-11 01:09:05 Author: hackernoon.com

While applications are producing messages to and consuming messages from Kafka, you'll notice new consumers of existing topics starting to emerge. These new consumers (applications) might have been written by the same engineers who wrote the original producer of those messages or by people you don't know. The emergence of these consumers is perfectly normal. However, they'll need to understand the format of the messages on the topic.

Another thing you might notice is that the format of the messages in your topic evolves as business needs evolve. For example, a single full-name field might be split into separate first-name and last-name fields. As these things change, the initial schema of our data must change too.

The schema of our domain objects is a constantly moving target, and we need to agree on the schema of the messages in whatever topic we're dealing with. This is where the Confluent Schema Registry comes in.

In this article, you'll get a comprehensive overview of the Kafka Schema Registry. You'll go through what a schema registry is, with a focus on the Confluent Schema Registry. By the end of this article, you'll be equipped with the knowledge needed to navigate the complexities of schema evolution in Kafka.

What is Schema Evolution?

Schema evolution is the process of managing changes to the structure of data over time. In Kafka, it means handling the modifications to the format of the messages being produced and consumed in Kafka topics.

As applications and business requirements evolve, the data they generate and consume also change, necessitating updates to the schema. These changes must be managed carefully to ensure compatibility between producers and consumers of the data.

Why is Schema Evolution Important in Data Streaming?

In a data streaming environment, schema evolution is crucial for several reasons. First, it ensures that new applications can consume data from existing topics without issues, even if they were developed after the original producers. This compatibility is essential for scaling applications and integrating new features without disrupting existing workflows.

Secondly, schema evolution allows for the natural growth and adaptation of data models to meet changing business requirements. For instance, as new features are added to an application, new fields may need to be added to the data schema. Properly managing these changes ensures that all applications can easily process the updated data.

Challenges of Schema Evolution

Managing schema evolution comes with its own set of challenges. One major challenge is ensuring backward and forward compatibility. Backward compatibility means new consumers can read data written by older producers, while forward compatibility means old consumers can read data written by new producers.

Achieving this compatibility requires careful planning and often involves using a schema registry, like the Confluent Schema Registry, to manage and validate schema versions.

Another challenge is handling the complexity of schema changes. Changes can range from simple additions of new fields to more complex alterations like changing data types or splitting fields.

Each type of change needs to be managed to avoid breaking data processing pipelines. For example, adding a new field is typically straightforward, but changing a field's data type can have significant implications for data consumers.
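To make the two compatibility directions concrete, here is a minimal sketch in Python. It models a schema as a plain mapping from field name to a (type, has default) pair and applies a simplified rule: a reader can decode a writer's data if every field the reader expects either exists in the writer's schema with the same type or has a default. The `can_read` function and the schema layout are illustrative assumptions, not the Schema Registry's actual implementation, which also deals with type promotion, unions, aliases, and more.

```python
from typing import Dict, Tuple

# Simplified schema model (an assumption for illustration):
# field name -> (type name, whether the field has a default value)
Schema = Dict[str, Tuple[str, bool]]


def can_read(reader: Schema, writer: Schema) -> bool:
    """Return True if data written with `writer` can be decoded with `reader`."""
    for name, (reader_type, has_default) in reader.items():
        if name in writer:
            writer_type, _ = writer[name]
            if writer_type != reader_type:
                return False  # type changed: treated as incompatible here
        elif not has_default:
            return False  # reader needs a field the writer never wrote
    return True  # fields only the writer knows about are ignored


v1: Schema = {"id": ("int", False), "name": ("string", False)}
# v2 adds a new field that carries a default
v2: Schema = {**v1, "age": ("int", True)}
# v3 changes the data type of an existing field
v3: Schema = {"id": ("string", False), "name": ("string", False)}

backward_ok = can_read(reader=v2, writer=v1)     # new consumer, old data
forward_ok = can_read(reader=v1, writer=v2)      # old consumer, new data
type_change_ok = can_read(reader=v3, writer=v1)  # changed type, old data
```

Under this simplified rule, adding a defaulted field passes in both directions, while the type change in `v3` is rejected, which mirrors the difference between the "straightforward" and "significant" changes described above.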

What is a Schema Registry?

A schema registry is a centralized repository for storing and managing data schemas. In Confluent's case, it runs as a standalone server process on a machine external to the Kafka brokers.

The primary purpose of a schema registry is to maintain a database of schemas, ensuring consistency and compatibility of data formats across different systems and services within an organization.

Benefits of using a Schema Registry

Without a schema registry, you have no reliable way of keeping up with the changes in your data schemas. A registry gives you an organized collection of the schemas defined for your data.

Aside from that, there are a few other benefits of relying on a schema registry in your data architecture:

- Centralized Management: With a Schema registry, all your schemas have a single storage location, making it easier to organize and retrieve schemas when needed.

- Version Control: Schema registries typically support schema versioning. With this, you can track changes over time between your original schema and the current one.

- Compatibility Checks: Schema registries can perform compatibility checks to ensure that changes to schemas do not break existing applications. They can verify that new versions of schemas are compatible with previous versions.

- Interoperability: Using a schema registry, different systems and services can communicate more effectively, as they can all refer to the same schemas for data structure definitions. This helps in reducing errors due to mismatched data formats.

- Documentation: Schemas stored in a registry can serve as documentation for the data structures and schema types used within an organization, helping developers understand the data formats without referring to scattered documents.

Popular Schema Registries

Although the focus of this article is the Confluent Schema Registry, it is important to note that there are other types of Schema registries, which include:

- AWS Glue Schema Registry: A feature of AWS Glue, this fully managed schema registry supports Avro, JSON Schema, and Protobuf.

- Azure Schema Registry: The Azure Schema Registry is a part of Azure Event Hubs and supports the Avro format.

Confluent Schema Registry

The Confluent Schema Registry offers a RESTful interface for storing and managing schemas. It is available self-managed as part of Confluent Platform or fully managed in Confluent Cloud, and it currently supports Avro, JSON Schema, and Protobuf schemas.

The Confluent Schema Registry defines a scope in which schemas can evolve, known as a subject. By default, the subject name is derived from the Kafka topic name (for example, the subject for the message values of a topic named User is User-value), but you can modify this on a per-topic basis.
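As a small illustration of the default topic-based naming, the sketch below derives a subject name from a topic name by appending a `-key` or `-value` suffix. The function name `topic_name_subject` is hypothetical; in practice the serializer in your Kafka client computes the subject for you.

```python
def topic_name_subject(topic: str, is_key: bool = False) -> str:
    """Subject name under the default topic-based naming strategy."""
    suffix = "key" if is_key else "value"
    return f"{topic}-{suffix}"


value_subject = topic_name_subject("User")            # schema for message values
key_subject = topic_name_subject("User", is_key=True)  # schema for message keys
```

Message keys and values get separate subjects, so their schemas can evolve independently.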

The Confluent Schema Registry keeps track of different versions of schemas, allowing you to manage changes over time without breaking existing systems.

Key Features of Confluent Schema Registry

Some key features of the Confluent Schema Registry include:

- Unique ID: Each schema registered in the Schema Registry is assigned a unique ID. This ID is embedded in the Kafka message, allowing consumers to retrieve the correct schema for deserialization. This ensures that both producers and consumers are always in sync with the schema used, reducing the risk of data interpretation errors.

- Versioning: Schema Registry supports schema versioning, allowing schemas to evolve over time. Each time a schema is updated, a new version is created and stored alongside previous versions. This makes it possible to maintain backward and forward compatibility, ensuring that updates to the schema do not disrupt existing consumers.

- Multi-Format Support: As earlier stated, this schema registry supports multiple data serialization formats, including Avro, JSON Schema, and Protobuf. This flexibility allows organizations to choose the serialization format that best suits their needs while still benefiting from schema management and validation.

- REST API: It provides a RESTful interface for managing schemas. This API allows users to register new schemas, retrieve existing schemas, check compatibility, and manage schema versions programmatically. The REST API also makes integrating the Schema Registry with other tools and automation scripts easy.
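The operations listed above map onto a handful of REST paths. The sketch below only builds the URLs and sends nothing; the helper names are hypothetical, and `BASE_URL` assumes a registry listening on localhost port 8081. The paths themselves follow the Schema Registry REST API.

```python
from urllib.parse import quote

BASE_URL = "http://localhost:8081"  # assumed address of a local registry


def _subject_path(subject: str) -> str:
    # Percent-encode the subject so unusual characters stay inside one path segment
    return f"{BASE_URL}/subjects/{quote(subject, safe='')}"


def register_schema_url(subject: str) -> str:
    """POST here to register a new schema version under a subject."""
    return f"{_subject_path(subject)}/versions"


def schema_version_url(subject: str, version: str = "latest") -> str:
    """GET here to retrieve a specific (or the latest) version of a subject."""
    return f"{_subject_path(subject)}/versions/{version}"


def schema_by_id_url(schema_id: int) -> str:
    """GET here to retrieve a schema by its unique ID."""
    return f"{BASE_URL}/schemas/ids/{schema_id}"


def compatibility_check_url(subject: str, version: str = "latest") -> str:
    """POST a candidate schema here to test it against an existing version."""
    return f"{BASE_URL}/compatibility/subjects/{quote(subject, safe='')}/versions/{version}"
```

Keeping URL construction in one place like this makes it easier to call the registry from automation scripts without scattering path strings around.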

How does it work?

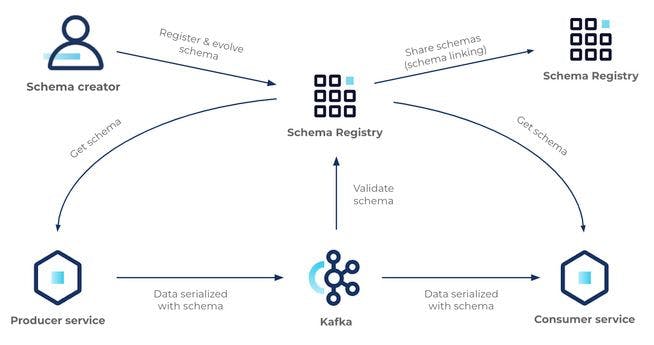

The Confluent Schema Registry operates through the API, which both producers and consumers of Kafka messages use to ensure compatibility with existing schemas.

Before sending data to Kafka, producers check if their message schema is compatible with previous versions. This is done by calling the Schema Registry API at production time.

When consuming messages, consumers use the schema ID carried in each message to look up the schema it was written with and check that it can be read with the schema they expect.

The Schema Registry compares the new schema with the most recently registered schema:

- If it matches, the production succeeds.

- If it's different but still adheres to the defined compatibility rules for the topic, the production also succeeds.

- If it violates the compatibility rules, the production fails, and the application is notified of the failure, allowing for responsible handling of the error.

On the other hand, if a consumer reads a message whose schema is incompatible with the one it expects, deserialization fails and the message is not consumed, avoiding potential errors down the line.

The Schema Registry provides feedback on compatibility issues, preventing runtime failures whenever possible. This makes handling schema evolution easier by catching issues early and providing clear error messages.
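The three registration outcomes described above can be sketched with a toy in-memory registry. Everything here is an assumption made for illustration: the class `ToyRegistry`, the exception name, and especially the compatibility rule, which only accepts new schemas that keep every existing field unchanged and add fields with defaults. The real Schema Registry supports several configurable compatibility modes with much richer rules.

```python
import json
from typing import Callable, Dict, List


class IncompatibleSchemaError(Exception):
    """Raised when a new schema breaks the subject's compatibility rule."""


def keeps_fields_and_adds_defaults(new: dict, old: dict) -> bool:
    """Toy rule: old fields must survive unchanged; new fields need defaults."""
    new_fields = {field["name"]: field for field in new["fields"]}
    for field in old["fields"]:
        kept = new_fields.pop(field["name"], None)
        if kept is None or kept["type"] != field["type"]:
            return False
    return all("default" in field for field in new_fields.values())


class ToyRegistry:
    def __init__(self, is_compatible: Callable[[dict, dict], bool]):
        self._is_compatible = is_compatible
        self._ids: Dict[str, int] = {}             # canonical schema text -> ID
        self._schemas: Dict[int, dict] = {}        # ID -> schema
        self._subjects: Dict[str, List[int]] = {}  # subject -> IDs, one per version

    def register(self, subject: str, schema: dict) -> int:
        text = json.dumps(schema, sort_keys=True)
        versions = self._subjects.setdefault(subject, [])
        # Outcome 1: the schema matches one already registered for the subject
        if self._ids.get(text) in versions:
            return self._ids[text]
        # Outcome 3: the schema violates the compatibility rule
        if versions and not self._is_compatible(schema, self._schemas[versions[-1]]):
            raise IncompatibleSchemaError(f"rejected for subject {subject!r}")
        # Outcome 2: different but compatible, so it becomes a new version
        schema_id = self._ids.setdefault(text, len(self._ids) + 1)
        self._schemas[schema_id] = schema
        versions.append(schema_id)
        return schema_id

    def versions(self, subject: str) -> List[int]:
        return list(self._subjects.get(subject, []))


registry = ToyRegistry(keeps_fields_and_adds_defaults)
v1 = {"type": "record", "name": "User", "fields": [{"name": "id", "type": "int"}]}
v2 = {"type": "record", "name": "User", "fields": [
    {"name": "id", "type": "int"},
    {"name": "age", "type": ["null", "int"], "default": None},
]}
first_id = registry.register("User-value", v1)
same_id = registry.register("User-value", v1)   # identical schema: same ID
second_id = registry.register("User-value", v2)  # compatible: new version
```

Registering a schema that changes the type of `id` would raise `IncompatibleSchemaError`, giving the producing application a chance to handle the failure before any message is sent.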

To minimize latency, both producers and consumers cache schemas locally. Once a schema has been checked and validated, it is cached under its immutable ID, reducing the need for repeated round trips to the Schema Registry.
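Here is a rough sketch of how an ID can travel with a message and how a local cache avoids repeated lookups. The framing follows Confluent's documented wire format (a zero magic byte, then the schema ID as a 4-byte big-endian integer, then the serialized payload). The `CachingSchemaLookup` class and the `fetch` callable are hypothetical stand-ins for what a real client library does internally.

```python
import struct
from typing import Callable, Dict, Tuple

MAGIC_BYTE = 0  # first byte of a framed message
HEADER = struct.Struct(">bI")  # magic byte + 4-byte big-endian schema ID


def frame(schema_id: int, payload: bytes) -> bytes:
    """Prefix a serialized payload with the magic byte and schema ID."""
    return HEADER.pack(MAGIC_BYTE, schema_id) + payload


def unframe(message: bytes) -> Tuple[int, bytes]:
    """Split a framed message into (schema ID, serialized payload)."""
    magic, schema_id = HEADER.unpack(message[:HEADER.size])
    if magic != MAGIC_BYTE:
        raise ValueError(f"unexpected magic byte: {magic}")
    return schema_id, message[HEADER.size:]


class CachingSchemaLookup:
    """Looks up schemas by ID, asking the registry at most once per ID."""

    def __init__(self, fetch: Callable[[int], str]):
        self._fetch = fetch  # stand-in for a call to the registry
        self._cache: Dict[int, str] = {}

    def get(self, schema_id: int) -> str:
        if schema_id not in self._cache:
            self._cache[schema_id] = self._fetch(schema_id)
        return self._cache[schema_id]


calls = []


def fake_fetch(schema_id: int) -> str:
    calls.append(schema_id)  # record each simulated round trip
    return '{"type": "string"}'


lookup = CachingSchemaLookup(fake_fetch)
message = frame(42, b"payload")
schema_id, payload = unframe(message)
lookup.get(schema_id)
lookup.get(schema_id)  # served from the cache, no second round trip
```

Because an ID always refers to the same schema, the cache never needs invalidating, which is what makes this optimization safe.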

Serialization & Deserialization

Serialization and deserialization (SerDe) involve converting data into a byte format for transfer over the network or saving to disk (serialization) and converting it back into a usable format (deserialization).

When transmitting data over a network or storing it, encoding the data into bytes is essential. Initially, serialization methods were often specific to programming languages, such as Java serialization.

However, these methods made it difficult to consume data in different languages. The evolution of serialization has led to more language-agnostic formats like JSON, but without strictly defined schemas, these formats have significant drawbacks: nothing enforces the structure of the data, and repeating field names in every message adds overhead.

To address these issues, cross-language serialization formats like Avro and Protocol Buffers (Protobuf), along with schema languages like JSON Schema, have emerged. They rely on formally defined schemas, which specify the structure, type, and meaning of the data, allowing for more efficient encoding and a clear "contract" between data producers and consumers.

How Does Serialization & Deserialization Work?

- Serialization: Using the Avro schema, you can serialize a Java object (Plain Old Java Object, or POJO) into a byte array. This process converts the structured data into a compact binary format suitable for transmission or storage.

- Deserialization: When reading the data back, the Avro schema is used again to deserialize the byte array back into a Java object. Since the schema is provided at decoding time, metadata such as field names doesn't need to be explicitly encoded in the data, making the process efficient and compact.
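To show why a schema makes the encoding compact, the sketch below hand-rolls a tiny subset of Avro's binary encoding in Python: integers as zigzag variable-length values, strings as a length followed by UTF-8 bytes, and a record as its field values written in schema order. It uses a plain dict instead of a Java object, and the field list `USER_FIELDS` is a simplified stand-in for a real schema. Treat it as an illustration only; real applications should use an Avro library.

```python
import json
from typing import Tuple


def encode_long(n: int) -> bytes:
    """Zigzag-encode an integer, then write it as base-128 varint bytes."""
    z = (n << 1) ^ (n >> 63)
    out = bytearray()
    while z & ~0x7F:
        out.append((z & 0x7F) | 0x80)  # high bit set: more bytes follow
        z >>= 7
    out.append(z)
    return bytes(out)


def decode_long(buf: bytes, pos: int) -> Tuple[int, int]:
    """Read a varint at `pos`; return (value, position after it)."""
    shift = z = 0
    while True:
        byte = buf[pos]
        pos += 1
        z |= (byte & 0x7F) << shift
        if not byte & 0x80:
            break
        shift += 7
    return (z >> 1) ^ -(z & 1), pos


def encode_string(s: str) -> bytes:
    raw = s.encode("utf-8")
    return encode_long(len(raw)) + raw


def decode_string(buf: bytes, pos: int) -> Tuple[str, int]:
    length, pos = decode_long(buf, pos)
    return buf[pos:pos + length].decode("utf-8"), pos + length


# Simplified schema: ordered (field name, type) pairs, shared by both sides
USER_FIELDS = [("id", "int"), ("name", "string"), ("email", "string")]


def serialize(record: dict) -> bytes:
    """Write field values in schema order; field names are never written."""
    parts = []
    for name, field_type in USER_FIELDS:
        value = record[name]
        parts.append(encode_long(value) if field_type == "int" else encode_string(value))
    return b"".join(parts)


def deserialize(buf: bytes) -> dict:
    """Rebuild the record by reading values in the order the schema dictates."""
    record, pos = {}, 0
    for name, field_type in USER_FIELDS:
        decode = decode_long if field_type == "int" else decode_string
        record[name], pos = decode(buf, pos)
    return record


user = {"id": 1, "name": "Ada", "email": "ada@example.com"}
binary = serialize(user)
as_json = json.dumps(user).encode("utf-8")
round_trip = deserialize(binary)
```

The binary form contains only the values, so it is noticeably smaller than the JSON form of the same record, but it is unreadable without the schema. That is exactly why both sides need a shared place to find it.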

What does a Schema Definition look like?

As earlier stated, the Confluent Schema Registry supports three serialization formats: JSON Schema, Avro, and Protobuf. Each format allows you to define the schema of your data objects in a way that is both human-readable and source-controllable.

Interface Description Language (IDL)

Depending on the serialization format, you can describe a schema using an Interface Description Language (IDL). An IDL is a text file format that outlines the structure of the data. This makes managing and tracking schema changes easy using version control systems like Git.

In Avro, you can write a .avsc file, which is a JSON-formatted file describing the schema. Additionally, there are tools available that can take this IDL and generate code for various programming languages.

For instance, if you're using Java, Maven and Gradle plugins can convert the Avro schema into Java objects. This tooling pathway helps prevent runtime failures due to schema evolution and fosters collaboration around schema changes by centralizing them in a single file.

Below is an example of what an Avro .avsc file looks like:

    {
      "type": "record",
      "name": "User",
      "namespace": "com.example",
      "fields": [
        {
          "name": "id",
          "type": "int"
        },
        {
          "name": "name",
          "type": "string"
        },
        {
          "name": "email",
          "type": "string"
        },
        {
          "name": "age",
          "type": ["null", "int"],
          "default": null
        },
        {
          "name": "address",
          "type": {
            "type": "record",
            "name": "Address",
            "fields": [
              {"name": "street", "type": "string"},
              {"name": "city", "type": "string"},
              {"name": "zipcode", "type": "string"}
            ]
          }
        }
      ]
    }

This schema defines a user record with an ID, name, email, optional age, and an embedded address record containing street, city, and zip code fields.

Below is a breakdown of this Avro schema:

- type: Indicates that this is a record (a complex data type with named fields).

- name: The name of the record.

- namespace: A namespace to avoid name conflicts.

- fields: An array of field definitions, each with:

  - name: The name of the field.

  - type: The type of the field. This can be a primitive type (e.g., "int", "string") or a complex type (e.g., another record or a union such as ["null", "int"]).

  - default: Optional. It provides the value to use for the field when it is not present in the data being read.
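To see how `name`, `type`, and `default` work together, here is a minimal sketch that checks a Python dict against a schema shaped like the one above and fills in defaults for missing fields. The `conform` function and `SchemaMismatch` exception are hypothetical, and the sketch supports only a few primitive types, unions, and nested records. It also simplifies how defaults work: in Avro itself, defaults are applied when a reader's schema has a field that the writer's schema lacks, whereas this sketch collapses that into a single step.

```python
from typing import Any

PRIMITIVES = {"int": int, "string": str, "boolean": bool, "null": type(None)}


class SchemaMismatch(ValueError):
    """Raised when a value does not fit the schema."""


def conform(value: Any, schema: Any) -> Any:
    """Return `value` checked against `schema`, with record defaults applied."""
    if isinstance(schema, list):  # union: accept the first branch that fits
        for branch in schema:
            try:
                return conform(value, branch)
            except SchemaMismatch:
                continue
        raise SchemaMismatch(f"{value!r} matches no branch of {schema}")
    if isinstance(schema, dict):
        if schema.get("type") != "record":
            raise NotImplementedError("only record types are sketched here")
        if not isinstance(value, dict):
            raise SchemaMismatch(f"expected a record, got {value!r}")
        result = {}
        for field in schema["fields"]:
            name = field["name"]
            if name in value:
                result[name] = conform(value[name], field["type"])
            elif "default" in field:
                result[name] = field["default"]
            else:
                raise SchemaMismatch(f"missing required field {name!r}")
        return result
    if schema not in PRIMITIVES:
        raise NotImplementedError(f"type {schema!r} is not sketched here")
    # bool is a subclass of int in Python, so reject it explicitly for "int"
    is_bool_as_int = schema == "int" and isinstance(value, bool)
    if not isinstance(value, PRIMITIVES[schema]) or is_bool_as_int:
        raise SchemaMismatch(f"expected {schema}, got {value!r}")
    return value


USER_SCHEMA = {
    "type": "record", "name": "User", "namespace": "com.example",
    "fields": [
        {"name": "id", "type": "int"},
        {"name": "name", "type": "string"},
        {"name": "email", "type": "string"},
        {"name": "age", "type": ["null", "int"], "default": None},
        {"name": "address", "type": {
            "type": "record", "name": "Address",
            "fields": [
                {"name": "street", "type": "string"},
                {"name": "city", "type": "string"},
                {"name": "zipcode", "type": "string"},
            ],
        }},
    ],
}

user = conform(
    {
        "id": 1,
        "name": "Ada",
        "email": "ada@example.com",
        "address": {"street": "1 Main St", "city": "London", "zipcode": "N1"},
    },
    USER_SCHEMA,
)
```

Here the input omits `age`, so the result carries the schema's default of `None` for it, while a missing `id` or a string-valued `id` would raise `SchemaMismatch`.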

Registering this Schema with the Confluent Schema Registry

To register this schema with the Confluent Schema Registry, you would typically use the Confluent CLI, a REST API, or a client library.

Using the REST API, you'll have something like this:

    curl -X POST \
      -H "Content-Type: application/vnd.schemaregistry.v1+json" \
      --data '{"schema": "{\"type\": \"record\", \"name\": \"User\", \"namespace\": \"com.example\", \"fields\": [{\"name\": \"id\", \"type\": \"int\"}, {\"name\": \"name\", \"type\": \"string\"}, {\"name\": \"email\", \"type\": \"string\"}, {\"name\": \"age\", \"type\": [\"null\", \"int\"], \"default\": null}, {\"name\": \"address\", \"type\": {\"type\": \"record\", \"name\": \"Address\", \"fields\": [{\"name\": \"street\", \"type\": \"string\"}, {\"name\": \"city\", \"type\": \"string\"}, {\"name\": \"zipcode\", \"type\": \"string\"}]}}]}"}' \
      http://localhost:8081/subjects/User-value/versions

This command registers a schema with the Confluent Schema Registry by sending a POST request to the Registry's REST API. Below is an explanation of the code:

- -X POST: Specifies that this is a POST request.

- -H "Content-Type: application/vnd.schemaregistry.v1+json": Sets the content type to the Schema Registry's JSON media type, indicating that the request body is JSON.

- --data: Provides the JSON payload, whose schema field contains the schema definition as an escaped string.

This schema defines a User record with fields for id, name, email, age, and an embedded address record, which includes street, city, and zipcode.

When the curl command is executed, it sends this schema to the Schema Registry at http://localhost:8081/subjects/User-value/versions, registering it under the subject User-value.
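Hand-escaping the schema inside a JSON string is error-prone, so a script can let a JSON library do it. The sketch below builds an equivalent request in Python using only the standard library. The function name `build_register_request` is hypothetical, and the code assumes a registry at the same localhost address. The call that would actually send the request is left commented out because it needs a running registry.

```python
import json
import urllib.request

REGISTRY_URL = "http://localhost:8081"  # assumed local registry address


def build_register_request(subject: str, schema: dict) -> urllib.request.Request:
    """Build (but do not send) a POST that registers `schema` under `subject`."""
    # The API expects the schema itself as a JSON-encoded string inside the
    # "schema" field, hence the nested json.dumps call.
    body = json.dumps({"schema": json.dumps(schema)}).encode("utf-8")
    return urllib.request.Request(
        url=f"{REGISTRY_URL}/subjects/{subject}/versions",
        data=body,
        headers={"Content-Type": "application/vnd.schemaregistry.v1+json"},
        method="POST",
    )


user_schema = {
    "type": "record",
    "name": "User",
    "namespace": "com.example",
    "fields": [
        {"name": "id", "type": "int"},
        {"name": "name", "type": "string"},
        {"name": "email", "type": "string"},
        {"name": "age", "type": ["null", "int"], "default": None},
        {"name": "address", "type": {
            "type": "record",
            "name": "Address",
            "fields": [
                {"name": "street", "type": "string"},
                {"name": "city", "type": "string"},
                {"name": "zipcode", "type": "string"},
            ],
        }},
    ],
}

request = build_register_request("User-value", user_schema)

# To send it against a running registry:
# with urllib.request.urlopen(request) as response:
#     print(json.load(response))  # response body includes the schema's ID
```

Building the request separately from sending it also makes the payload easy to inspect or unit test before it reaches the registry.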

Conclusion

This article provides a comprehensive guide on the Confluent Schema Registry. It outlines the importance of schema evolution in data streaming, its challenges, and a schema registry's role in addressing them.

The Confluent Schema Registry offers features like centralized management, version control, compatibility checks, and support for multiple serialization formats (Avro, JSON Schema, Protobuf).

No matter how good a job you do up front in defining your schema, remember that as the world changes, your schemas will change. You need a way of managing those changes, and the Confluent Schema Registry helps you do that.
