2024-7-16 04:39:42 Author: hackernoon.com

Thank you for the valuable input and feedback from Zhenyang at Upshot, Fran at Giza, Ashely at Neuronets, Matt at Valence, and Dylan at Pond.

This research unpacks critical areas of AI that matter to developers in the field and explores the emerging opportunities at the convergence of Web3 and AI technologies.

TL;DR

Current advancements in AI-centric decentralized applications (DApps) spotlight several instrumental tools and concepts:

- Decentralized OpenAI Access, GPU Network: AI's expansive and rapid growth, coupled with its vast application potential, makes it a significantly hotter sector than Bitcoin mining once was. This growth is underpinned by the need for diverse GPU models and their strategic geographical distribution.

- Inference and Agent Networks: While these networks share similar infrastructure, their focus points diverge. Inference networks cater primarily to experienced developers for model deployment, without necessarily requiring high-end GPUs for non-LLM models. Conversely, agent networks, which are more LLM-centric, require developers to concentrate on prompt engineering and the integration of various agents, invariably necessitating the use of advanced GPUs.

- AI Infrastructure Projects: These projects continue to evolve, offering new features and promising enhanced functionalities for future applications.

- Crypto-native Projects: Many of these are still in the testnet phase, facing stability issues, complex setups, and limited functionalities while taking time to establish their security and privacy credentials.

- Undiscovered Areas: Assuming AI DApps will significantly impact the market, several areas remain underexplored, including monitoring, RAG-related infrastructure, Web3 native models, decentralized agents with crypto-native API and data, and evaluation networks.

- Vertical Integration Trends: Infrastructure projects are increasingly aiming to provide comprehensive, one-stop solutions for AI DApp developers.

- Hybrid Future Predictions: The future likely holds a blend of frontend inference alongside on-chain computations, balancing cost considerations with verifiability.

Introduction to Web3 x AI

The fusion of Web3 and AI is generating intense interest in the crypto sphere, as developers actively explore AI infrastructure tailored to crypto. The aim is to give smart contracts sophisticated AI capabilities. That requires careful attention to data handling, model accuracy, computational needs, deployment complexity, and blockchain integration.

Early solutions built by Web3 pioneers include:

- Enhanced GPU networks

- Dedicated crypto datasets and community data labeling

- Community-trained models

- Verifiable AI inference and training processes

- Comprehensive agent stores

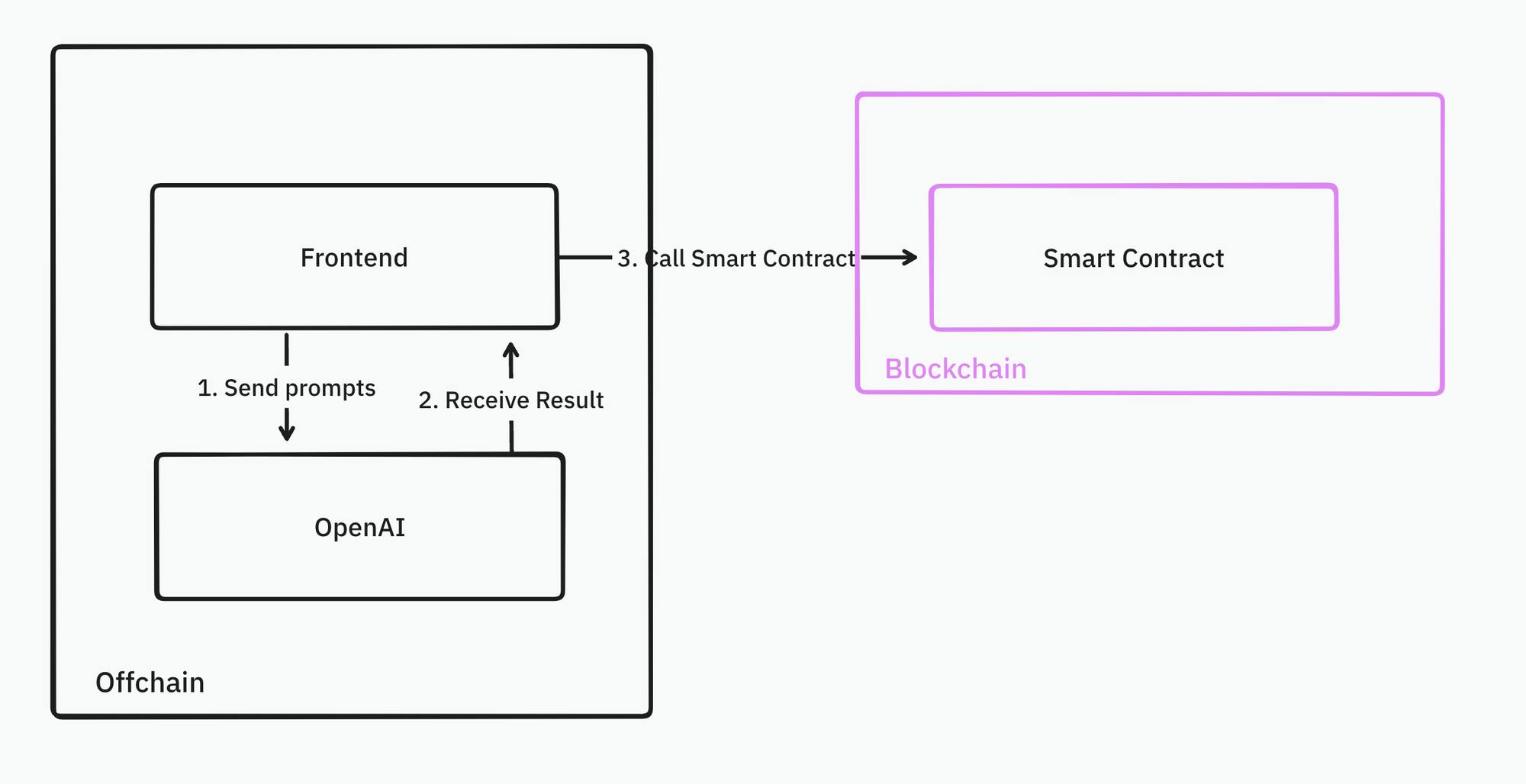

Despite this growing infrastructure, actual use of AI in DApps remains limited. Common tutorials only scratch the surface. They typically show basic OpenAI API calls from a frontend, without leveraging blockchain's distinctive properties of decentralization and verifiability.

As the landscape evolves, we anticipate significant developments with many crypto-native AI infrastructures transitioning from testnets to full operational status over the coming months.

In this fast-moving context, our exploration covers the tools available in crypto-native AI infrastructure. The goal is to prepare developers for an imminent breakthrough in crypto comparable to the GPT-3.5 moment.

RedPill: Empowering Decentralized AI

Leveraging OpenAI's robust models such as GPT-4-vision, GPT-4-turbo, and GPT-4o offers compelling advantages for those aiming to develop cutting-edge AI DApps. These powerful tools provide the foundational capabilities needed to pioneer advanced applications and solutions within the burgeoning AI x Web3 landscape.

Integrating OpenAI into decentralized applications (dApps) is a hot topic among developers who can either call the OpenAI API from oracles or frontends. RedPill is at the forefront of this integration, as it democratizes access to top AI models by offering an aggregated API service. This service amalgamates various OpenAI API contributions and presents them under one roof, bringing benefits such as increased affordability, better speed, and comprehensive global access without the constraints typically posed by OpenAI.
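An aggregated service sits in front of OpenAI models. In principle, then, a client only needs to change the base URL and API key it sends requests to. The sketch below builds an OpenAI-style Chat Completions request but does not send it. It assumes the aggregator exposes an OpenAI-compatible `/chat/completions` path. The aggregator base URL and both keys are placeholders, not RedPill's documented values.

```python
import json
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class ChatRequest:
    url: str
    headers: Dict[str, str]
    body: str


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str) -> ChatRequest:
    """Build (but do not send) an OpenAI-style Chat Completions request.

    Assumption: the provider at base_url accepts the OpenAI request schema,
    so switching providers only changes base_url and api_key.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return ChatRequest(
        url=base_url.rstrip("/") + "/chat/completions",
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        body=json.dumps(payload),
    )


# Same call shape for two providers; the aggregator URL is hypothetical.
openai_req = build_chat_request("https://api.openai.com/v1", "YOUR_OPENAI_KEY", "gpt-4o", "Summarize this proposal.")
aggregator_req = build_chat_request("https://aggregator.example/v1/", "YOUR_AGGREGATOR_KEY", "gpt-4o", "Summarize this proposal.")
print(aggregator_req.url)  # https://aggregator.example/v1/chat/completions
```

Keeping the request shape identical lets a frontend or oracle switch providers through configuration alone.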

Crypto developers often run into limited tokens per minute (TPM) or restricted model access due to geographic restrictions, and both can become significant roadblocks. RedPill addresses these issues by routing developers' requests to individual contributors within its network, effectively bypassing direct restrictions from OpenAI. The table below compares RedPill and OpenAI on capacity and cost:

| | RedPill | OpenAI |
|---|---|---|
| TPM (Tokens Per Minute) | Unlimited | 30k - 450k for most users |
| Price | $5 per 10 million requests plus token incentives | $5 per 10 million requests |
| RPM (Requests Per Minute) | Unlimited | 500 - 5k for most users |
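Pooling contributors can raise effective throughput. Suppose each contributor key has its own tokens-per-minute budget. A router can then spread requests across the keys, so the pool's capacity is roughly the sum of the individual budgets. The following is a hypothetical illustration of that idea using a fixed one-minute window. It is not RedPill's actual routing logic, and the contributor names and limits are made up.

```python
from dataclasses import dataclass
from typing import List

WINDOW_SECONDS = 60.0  # TPM budgets reset every minute


@dataclass
class Contributor:
    name: str
    tpm_limit: int          # hypothetical tokens-per-minute budget of one API key
    used: int = 0           # tokens consumed in the current window
    window_start: float = 0.0

    def refresh(self, now: float) -> None:
        # Open a new budget window once a full minute has elapsed.
        if now - self.window_start >= WINDOW_SECONDS:
            self.window_start = now
            self.used = 0

    @property
    def remaining(self) -> int:
        return self.tpm_limit - self.used


class Router:
    """Send each request to the contributor with the most unused budget."""

    def __init__(self, contributors: List[Contributor]):
        self.contributors = contributors

    def capacity(self, now: float) -> int:
        for c in self.contributors:
            c.refresh(now)
        return sum(c.remaining for c in self.contributors)

    def route(self, tokens: int, now: float) -> str:
        for c in self.contributors:
            c.refresh(now)
        best = max(self.contributors, key=lambda c: c.remaining)
        if best.remaining < tokens:
            raise RuntimeError("no contributor has enough budget left this minute")
        best.used += tokens
        return best.name


# Three hypothetical keys, each capped at 30k TPM, act like one 90k TPM pool.
pool = Router([Contributor("a", 30_000), Contributor("b", 30_000), Contributor("c", 30_000)])
print(pool.capacity(now=0.0))  # 90000
```

A single request still cannot exceed what one contributor has left. Pooling raises aggregate throughput, not the per-request ceiling.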

GPU Network

Besides using the OpenAI API, developers can host and run their own models on decentralized GPU networks. Popular platforms like io.net, Aethir, and Akash let developers create and manage GPU clusters. On those clusters they can deploy the models that matter most to them, whether proprietary or open-source.

These decentralized GPU networks draw computing power from individual contributors or small data centers. This provides a wider variety of machine specifications, more server locations, and lower costs, so developers can run ambitious AI experiments on a manageable budget. However, the decentralized model can mean fewer managed features and weaker guarantees on uptime and data privacy, as the following comparison shows:

| | GPU Network | Centralized GPU Provider |
|---|---|---|
| SLA (Uptime) | Variable | 99.99%+ |
| Integration and Automation SDKs | Limited | Available |
| Storage Services | Limited | Comprehensive (Backup, File, Object, Block storage and Recovery strategies) |
| Database Services | Limited | Widely Available |
| Identity and Access Management | Limited | Available |
| Firewall | Limited | Available |
| Monitoring/Management/Alert Services | Limited | Available |
| Compliance with GDPR, CCPA (Data Privacy) | Limited | Partial compliance |

The recent surge in interest around GPU networks eclipses even the Bitcoin mining craze. A few key factors contribute to this phenomenon:

- Diverse Audience: Unlike Bitcoin mining, which primarily attracted speculators, GPU networks appeal to a broader and more loyal base of AI developers.

- Flexible Hardware Requirements: AI applications require different GPU specifications depending on task complexity. Decentralized networks are advantageous here thanks to their proximity to end users and the resulting lower latency.

- Advanced Technology: These networks benefit from innovations in blockchain technology, virtualization, and computation clusters, enhancing their efficiency and scalability.

- Higher Yield Potential: The ROI on GPU-powered AI computation can be significantly higher than that of Bitcoin mining, which is highly competitive and offers limited returns.

- Industry Adoption: Major mining companies are diversifying their operations to include AI-specific GPU models to stay relevant and tap into the growing market.
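The "flexible hardware requirements" point can be made concrete with a simple selection rule. A developer picking from a heterogeneous pool might filter by GPU memory, prefer regions close to their users, and then take the cheapest remaining offer. The node names, regions, and prices below are invented for illustration and do not come from io.net, Aethir, or Akash.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class GpuOffer:
    provider: str
    gpu_model: str
    vram_gb: int
    region: str
    usd_per_hour: float


def pick_offer(offers: List[GpuOffer], min_vram_gb: int,
               preferred_regions: List[str]) -> Optional[GpuOffer]:
    """Return the cheapest offer with enough VRAM, preferring regions near users."""
    fits = [o for o in offers if o.vram_gb >= min_vram_gb]
    nearby = [o for o in fits if o.region in preferred_regions]
    candidates = nearby or fits  # fall back to any region if none are nearby
    return min(candidates, key=lambda o: o.usd_per_hour, default=None)


offers = [  # hypothetical marketplace listings
    GpuOffer("node-1", "RTX 4090", 24, "eu-west", 0.45),
    GpuOffer("node-2", "A100", 80, "us-east", 1.60),
    GpuOffer("node-3", "A100", 80, "eu-west", 1.85),
    GpuOffer("node-4", "T4", 16, "ap-south", 0.20),
]

# A small non-LLM model served to users in South Asia: a 16 GB card is enough.
print(pick_offer(offers, 16, ["ap-south"]).provider)  # node-4
# A large LLM for European users: needs 80 GB, EU preferred even if pricier.
print(pick_offer(offers, 80, ["eu-west"]).provider)   # node-3
```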

As the landscape for AI and decentralized computing continues to evolve, tools like RedPill and decentralized GPU networks are revolutionizing how developers overcome traditional barriers and unlock new possibilities in AI development.

Recommendation: io.net offers a straightforward user experience especially suited for Web2 developers. If you are flexible with your service level agreements (SLAs), io.net could be a budget-friendly option to consider.

Inference Network

An inference network forms the backbone of crypto-native AI infrastructure, designed to support AI models in processing data and making intelligent predictions or decisions. In the future, it's poised to handle billions of AI inference operations. Many blockchain layers (Layer 1 or Layer 2) already provide the capability for developers to invoke AI inference operations directly on-chain. Leaders in this market include platforms like Ritual, Vanna, and Fetch.ai.

These networks vary based on several factors including performance (latency, computation time), supported models, verifiability, and price (consumption and inference costs), as well as the overall developer experience.

Goal

In an ideal scenario, developers should be able to seamlessly integrate customized AI inference capabilities into any application, with comprehensive support for various proofs and minimal integration effort.

The inference network delivers all the necessary infrastructure elements developers need, such as on-demand proof generation and validation, inference computation, inference relay, Web2 and Web3 endpoints, one-click model deployment, monitoring, cross-chain interoperability, integration synchronization, and scheduled execution.

With these capabilities, developers can effortlessly integrate inference into their blockchain projects. For instance, when developing decentralized finance (DeFi) trading bots, machine learning models can be employed to identify buying and selling opportunities for trading pairs and execute strategies on a Base DEX.

Ideally, all infrastructure would be cloud-hosted. Developers could upload and store their strategy models in popular formats such as PyTorch, and the inference network would handle both storing those models and serving them for Web2 and Web3 queries.

Once the model is deployed, developers can trigger inference via Web3 API requests or directly from smart contracts. The inference network continuously runs the trading strategy and feeds the results back to the underlying smart contracts. Those contracts then execute trades automatically based on the results. If the strategy manages substantial community funds, it may also be necessary to prove that the inference was computed correctly.
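One tick of this loop can be sketched as follows: an off-chain model produces a signal, and the result is handed to a contract that executes the trade. A simple moving-average crossover stands in for the machine learning model. `TradeInstruction` is a hypothetical payload. A real deployment would encode it for whatever contract interface the inference network and DEX expose.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List, Optional


@dataclass(frozen=True)
class TradeInstruction:
    pair: str      # e.g. "WETH/USDC"
    side: str      # "buy" or "sell"
    amount: float  # size in base-asset units


def crossover_signal(prices: List[float], short: int = 3, long: int = 6) -> Optional[str]:
    """Stand-in 'model': detect a short/long moving-average crossover."""
    if len(prices) < long + 1:
        return None  # not enough history to compare two consecutive windows
    prev_short, prev_long = mean(prices[-short - 1:-1]), mean(prices[-long - 1:-1])
    cur_short, cur_long = mean(prices[-short:]), mean(prices[-long:])
    if prev_short <= prev_long and cur_short > cur_long:
        return "buy"
    if prev_short >= prev_long and cur_short < cur_long:
        return "sell"
    return None


def run_strategy(pair: str, prices: List[float], size: float) -> Optional[TradeInstruction]:
    """Infer, then return an instruction for the on-chain executor (or None)."""
    side = crossover_signal(prices)
    return TradeInstruction(pair, side, size) if side else None
```

For example, `run_strategy("WETH/USDC", [10] * 7 + [13], 1.0)` returns a buy instruction. A flat price series returns `None`, and no transaction is needed.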

Asynchronous vs. Synchronous Execution

While asynchronous execution might theoretically offer better performance, it can complicate the developer experience.

In the asynchronous model, developers initially submit their job to the inference network via smart contracts. Once the job is completed, the network's smart contract returns the result. This divides the programming into two phases: invoking the inference and processing its results.

This separation can lead to complexities, especially with nested inference calls or extensive logic handling.

Moreover, asynchronous models can be challenging to integrate with existing smart contracts, requiring additional coding, extensive error handling, and added dependencies.
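The two-phase shape of the asynchronous model can be simulated off-chain. Here, `InferenceOracle` is a hypothetical stand-in for an inference network's contract. `request` returns a job ID immediately, and the result only arrives later through a callback. As a result, the caller must keep its own bookkeeping to reconnect each result to the request that produced it.

```python
import itertools
from typing import Callable, Dict, List, Tuple

Callback = Callable[[int, str], None]


class InferenceOracle:
    """Hypothetical async oracle: requests are queued and fulfilled later."""

    def __init__(self, model: Callable[[str], str]):
        self.model = model
        self._ids = itertools.count(1)
        self._pending: List[Tuple[int, str, Callback]] = []

    def request(self, prompt: str, callback: Callback) -> int:
        job_id = next(self._ids)
        self._pending.append((job_id, prompt, callback))
        return job_id  # phase 1 ends here: the caller has no result yet

    def fulfill_all(self) -> None:
        # Simulates off-chain nodes delivering results in a later block.
        pending, self._pending = self._pending, []
        for job_id, prompt, callback in pending:
            callback(job_id, self.model(prompt))  # phase 2: result delivered


class Consumer:
    """Caller contract: must remember context between request and callback."""

    def __init__(self, oracle: InferenceOracle):
        self.oracle = oracle
        self.context: Dict[int, str] = {}  # job_id -> what this job was for
        self.results: Dict[str, str] = {}

    def ask(self, key: str, prompt: str) -> None:
        job_id = self.oracle.request(prompt, self.on_result)
        self.context[job_id] = key

    def on_result(self, job_id: int, output: str) -> None:
        key = self.context.pop(job_id)  # unknown-ID error handling omitted
        self.results[key] = output


oracle = InferenceOracle(model=str.upper)  # toy "model"
app = Consumer(oracle)
app.ask("greeting", "gm")
print(app.results)  # {} because the job is still pending
oracle.fulfill_all()
print(app.results)  # {'greeting': 'GM'}
```

Even in this toy version, the caller needs a `context` map and a separate callback. That extra state is what makes nested inference calls and integration with existing contracts harder.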

Synchronous execution is usually more straightforward for developers to implement, but it introduces challenges related to latency and blockchain design. For instance, rapidly changing inputs such as block time or market prices can become outdated by the time processing completes. This can cause smart contract executions to roll back, particularly for operations like swaps based on outdated prices.
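One common mitigation for stale inputs is to have the executing contract re-check them before acting. This mitigation is our assumption; the source does not prescribe it. The contract rejects a result if the price has since moved beyond a tolerance or if the inputs are older than a few blocks. The guard below sketches that check in Python, and the thresholds are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InferenceResult:
    action: str         # e.g. "swap"
    input_price: float  # price the model saw when it ran
    input_block: int    # block number at which the inputs were read


def is_still_valid(result: InferenceResult, current_price: float, current_block: int,
                   max_price_move: float = 0.005, max_block_age: int = 3) -> bool:
    """Return False if the result was computed on inputs that are now too stale."""
    if current_block - result.input_block > max_block_age:
        return False
    price_move = abs(current_price - result.input_price) / result.input_price
    return price_move <= max_price_move
```

With the defaults, a result computed at a price of 2000 is still accepted one block later at 2005, a 0.25% move. It is rejected at 2020, a 1% move.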

Valence is tackling these challenges by focusing on AI infrastructure that operates asynchronously.

Reality

In the current landscape, most new inference networks, such as Ritual Network, are still in testing. According to their public documentation, they offer limited functionality. Instead of providing cloud infrastructure for on-chain AI computation, they provide a framework for self-hosting AI computation and relaying the results to the blockchain.

Here is a typical architecture for running an AIGC (AI-generated content) NFT. A diffusion model generates the NFT image and uploads it to Arweave. The inference network then receives the Arweave address and mints the NFT on-chain.

This model requires developers to deploy and host most of the infrastructure independently, which includes managing Ritual Nodes with customized service logic, stable diffusion nodes, and NFT smart contracts.
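The data flow of this architecture is sketched here with stubs in place of every external service. `generate_image` and `upload_to_arweave` are hypothetical placeholders. A real upload returns a transaction ID from the Arweave network, whereas this stub derives a fake 43-character ID from a content hash. The metadata uses the common ERC-721 `name` and `image` fields. `MintRequest` stands in for the call the inference node would relay to the NFT contract.

```python
import base64
import hashlib
import json
from dataclasses import dataclass


def generate_image(prompt: str) -> bytes:
    """Placeholder for the diffusion model: returns deterministic fake bytes."""
    return hashlib.sha256(prompt.encode()).digest()


def upload_to_arweave(data: bytes) -> str:
    """Hypothetical stub: fakes a 43-char ID from the data's hash; no network call."""
    fake_id = base64.urlsafe_b64encode(hashlib.sha256(data).digest()).decode().rstrip("=")
    return f"https://arweave.net/{fake_id}"


@dataclass(frozen=True)
class MintRequest:
    to: str         # recipient address
    token_uri: str  # where the NFT metadata lives


def build_mint_request(prompt: str, recipient: str) -> MintRequest:
    # Steps 1-2: generate the image and store it permanently.
    image_uri = upload_to_arweave(generate_image(prompt))
    # Store ERC-721-style metadata that points at the image.
    metadata = {"name": prompt[:32], "image": image_uri}
    metadata_uri = upload_to_arweave(json.dumps(metadata).encode())
    # Step 3: the inference node would relay this to the contract's mint function.
    return MintRequest(to=recipient, token_uri=metadata_uri)
```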

Recommendation: Current inference networks are complex to integrate, and deploying custom models on them is difficult. Since many do not yet offer verifiability, running AI on the frontend may be a more straightforward option for developers. For those who need verifiability, the zero-knowledge machine learning (ZKML) provider Giza offers a promising alternative.

Agent Network

Agent networks make it easy for users to customize agents. These networks consist of autonomous entities or smart contracts that can execute tasks and interact with each other and with the blockchain. They are currently focused on large language models (LLMs), such as GPT chatbots designed specifically to understand Ethereum. However, these chatbots have limited capabilities today, which prevents developers from building complex applications on top of them.

Looking ahead, agent networks are poised to expand their capabilities by giving agents advanced tools, including external API access and execution functionality. Developers will be able to orchestrate workflows by connecting multiple specialized agents through prompts and shared context. Examples include agents focused on protocol design, Solidity development, code security review, and contract deployment.
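A minimal sketch of such an orchestrated workflow follows. Each specialized agent receives a role prompt plus the accumulated context and appends its output for the next agent. The `llm` parameter is a hypothetical callable. `fake_llm` is an offline stub, so the example runs without any model or agent-network API.

```python
from dataclasses import dataclass
from typing import Callable, List

LLM = Callable[[str], str]  # hypothetical: prompt in, completion out


@dataclass(frozen=True)
class Agent:
    role: str
    instructions: str

    def run(self, llm: LLM, context: str) -> str:
        prompt = f"You are the {self.role}. {self.instructions}\n\nContext so far:\n{context}"
        return llm(prompt)


def run_pipeline(agents: List[Agent], llm: LLM, task: str) -> str:
    """Run agents in order, passing each one's output to the next as context."""
    context = f"Task: {task}"
    for agent in agents:
        output = agent.run(llm, context)
        context += f"\n[{agent.role}] {output}"
    return context


pipeline = [
    Agent("protocol designer", "Outline the mechanism."),
    Agent("Solidity developer", "Write the contract."),
    Agent("security reviewer", "List vulnerabilities in the contract."),
    Agent("deployer", "Describe deployment steps."),
]


def fake_llm(prompt: str) -> str:
    # Offline stub: report which role produced the output.
    role = prompt.split(".")[0][len("You are the "):]
    return f"done by {role}"


result = run_pipeline(pipeline, fake_llm, "Build a staking vault")
print(result.splitlines()[-1])  # [deployer] done by deployer
```

Swapping `fake_llm` for a real model client changes nothing else in the pipeline. The coordination lives entirely in the prompts and the shared context string.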

Examples of agent networks include Flock.ai, Myshell, and Theoriq.

Recommendation: Current agent technologies are still evolving and offer limited functionality. Developers may therefore find more mature Web2 orchestration tools such as LangChain or LlamaIndex more effective for their needs.

Difference between Agent Network and Inference Network

While both agent networks and inference networks serve to enhance the capabilities and interactions on the blockchain, their core functions and operational focus differ greatly. Agent networks are geared towards automating interactions and expanding the utility of smart contracts through autonomous agents. In contrast, inference networks are primarily concerned with integrating and managing AI-driven data analyses on the blockchain. Each serves a unique purpose, tailored to different aspects of blockchain and AI integration.

Agent networks are primarily focused on LLMs and provide orchestration tools, such as LangChain, to connect these agents. For developers, this means there is no need to build machine learning models from scratch. The complexity of model development and deployment is abstracted away by the inference network, so developers can simply connect agents using appropriate tools and context. In most cases, end users interact directly with these agents, which simplifies the user experience.

Conversely, the inference network forms the operational backbone of the agent network and gives developers lower-level access. Unlike agent networks, inference networks are not used directly by end users. Developers must deploy their own models, which are not limited to LLMs, and can access them through off-chain or on-chain endpoints.

Interestingly, agent networks and inference networks are beginning to converge in some instances, with vertically integrated products emerging that offer both agent and inference functionalities. This integration is logical as both functions share a similar infrastructure backbone.

Comparison of Inference and Agent Networks:

| | Inference Network | Agent Network |
|---|---|---|
| Target Customers | Developers | End users/Developers |
| Supported Models | LLMs, Neural Networks, Traditional ML models | Primarily LLMs |
| Infrastructure | Supports diverse models | Primarily supports popular LLMs |
| Customizability | Broad model adaptability | Configurable through prompts and tools |
| Popular Projects | Ritual, Valence | Flock, Myshell, Theoriq, Olas |

Room for the Next Wave of Innovation

Looking more closely at the model development pipeline, numerous opportunities emerge in Web3:

- Datasets: Transforming blockchain data into ML-ready datasets is crucial. Projects like Giza are making strides by providing high-quality, DeFi-related datasets. However, the creation of graph-based datasets, which more aptly represent blockchain interactions, is still an area in need of development.

- Model Storage: For large models, efficient storage, distribution, and versioning are essential. Innovators in this space include Filecoin, Arweave (AR), and 0g.

- Model Training: Decentralized and verifiable model training remains a challenge. Entities like Gensyn, Bittensor, and Flock are making significant advancements.

- Monitoring: Effective infrastructure is necessary for monitoring model usage offchain and onchain, helping developers identify and rectify issues or biases in their models.

- RAG Infrastructure: Retrieval-augmented generation (RAG) increases demand for private, efficient storage and computation. Firstbatch and Bagel are examples of projects addressing these needs.

- Dedicated Web3 Models: Tailored models are essential for specific Web3 use cases, such as fraud detection or price predictions. For instance, Pond is developing blockchain-oriented graph neural networks (GNNs).

- Evaluation Networks: The proliferation of agents necessitates robust evaluation mechanisms. Platforms like Neuronets are pivotal in providing such services.

- Consensus: Traditional Proof of Stake (PoS) may not suit AI-oriented tasks due to their complexity. Bittensor, for example, has developed a consensus model that rewards nodes for contributing valuable AI insights.
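Turning raw transfers into a graph dataset, as the Datasets item suggests, can start very simply. Addresses become integer node IDs, and repeated transfers between the same pair collapse into one directed edge with total value and count as features. This is close to the edge-list-plus-features layout that graph neural network tooling commonly consumes. The sketch below is an assumption about one reasonable encoding, and the addresses and amounts are made up. A real pipeline would read transfers from a chain indexer.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Transfer = Tuple[str, str, float]  # (sender, receiver, amount)
Edge = Tuple[int, int, float, int]  # (src_id, dst_id, total_amount, tx_count)


def build_transfer_graph(transfers: List[Transfer]) -> Tuple[Dict[str, int], List[Edge]]:
    """Map addresses to node IDs and aggregate transfers into weighted directed edges."""
    node_index: Dict[str, int] = {}

    def idx(addr: str) -> int:
        # Assign the next free ID the first time an address is seen.
        return node_index.setdefault(addr, len(node_index))

    totals: Dict[Tuple[int, int], float] = defaultdict(float)
    counts: Dict[Tuple[int, int], int] = defaultdict(int)
    for sender, receiver, amount in transfers:
        edge = (idx(sender), idx(receiver))
        totals[edge] += amount
        counts[edge] += 1
    edges = [(s, r, totals[(s, r)], counts[(s, r)]) for (s, r) in totals]
    return node_index, edges


nodes, edges = build_transfer_graph([("0xA", "0xB", 5.0), ("0xA", "0xB", 2.5), ("0xB", "0xC", 1.0)])
print(nodes)  # {'0xA': 0, '0xB': 1, '0xC': 2}
print(edges)  # [(0, 1, 7.5, 2), (1, 2, 1.0, 1)]
```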

Future Outlook

The trend towards vertical integration is evident, where networks seek to provide comprehensive, multi-functional ML solutions from a single computational layer. This approach promises a streamlined, all-in-one solution for Web3 ML developers, propelling forward the integration of AI and blockchain technologies.

On-chain inference offers strong verifiability and integrates seamlessly with backend systems like smart contracts, but it remains costly and slow. I envision a hybrid approach in the future. Some inference tasks will run off-chain, on the frontend, for efficiency, while critical, decision-centric inference will continue to be handled on-chain. This paradigm is already in practice on mobile devices. Smaller models run locally for quick responses, while more complex computations are offloaded to the cloud, where large language models (LLMs) run.
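The hybrid split can be expressed as a routing rule. By default, inference runs locally on the frontend. It escalates to verifiable on-chain inference only when the result moves funds and enough value is at stake to justify the extra cost and latency. The rule, field names, and the $1,000 threshold are hypothetical choices for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Venue(Enum):
    FRONTEND = "frontend"  # fast and cheap, but not verifiable
    ONCHAIN = "onchain"    # slow and costly, but verifiable


@dataclass(frozen=True)
class InferenceTask:
    name: str
    moves_funds: bool         # does the result trigger a transfer or state change?
    value_at_risk_usd: float  # hypothetical estimate of what a wrong answer could cost


def choose_venue(task: InferenceTask, verify_threshold_usd: float = 1_000.0) -> Venue:
    """Send decision-critical tasks on-chain; keep everything else on the frontend."""
    if task.moves_funds and task.value_at_risk_usd >= verify_threshold_usd:
        return Venue.ONCHAIN
    return Venue.FRONTEND
```

For instance, an autocomplete suggestion stays on the frontend, while a model-driven vault rebalance worth $250,000 is routed on-chain.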
