2024-8-17 06:27:10 Author: hackernoon.com

“Absolutely no one knows how to use ChatGPT properly. Not one!” yells a copywriter who lost his long-term job a couple of weeks ago. The company he had written for over the last nine years switched to AI, which is less ingenious but enormously cheaper. Nothing personal, just business, they said.

Massive layoffs turn out to be just one side of the coin. The flip side is that low-quality AI-generated content spread at the speed of light. It flooded the web and disrupted search results; Google proved unequipped to respond to this new challenge quickly.

As a result, many writing professionals call AI content pure evil and blame it for all sorts of sins, some real and some not so much.

Below, I’ll tackle the common misbeliefs about AI and how it works. Spoiler: most of them aren’t misbeliefs but real flaws, so I’ll show you how to overcome them without breaking a sweat.

The Main AI Stigma

By far the most popular prejudice is that AI can produce only miserable texts that make readers feel sick and close the page as fast as possible.

This stigma holds true, but only for the particular case of near-zero human effort. Instead of researching, ideating, and sourcing, a user defines the topic in a couple of words and hits "Generate me an article!" The magic doesn’t happen: the outputs can hardly be called articles. They are thin, valueless pieces that stay close to the topic and are stuffed with relevant keywords.

But why the heck did this practice become so widespread? Because this is exactly what people want: do less, produce more, with quality a secondary concern. Just take a look at the promises that various AI writing services make to their potential users:

Jasper : Get a mind-blowing copy with AI — instantly generate high-quality copy for emails, ads, websites, listings, blogs & more.

Rytr : Rytr is an AI writing assistant that helps you create high-quality content, in just a few seconds, at a fraction of the cost!

ArticleForge : Get high-quality content in one click.

INK : Create content at a scale faster than ever before with INK's industry-leading SEO optimization and semantic intelligence.

The explanation is simple. When these services emerged, it was clear that with such miserable outputs they had to hurry to conquer the market before Google updated its ranking algorithms against mass-produced content.

They achieved this by aiming their services at a broad audience without writing skills. Skilled writers like me (and others, I believe) found this approach fundamentally wrong. I’ve lost count of how many services of that kind I evaluated and abandoned within the first 10 minutes of use.

Google’s March 2024 core update finally cut the cord by reducing low-quality, unoriginal content in search results.

Debunking Common AI Misbeliefs

Now that I've spilled the beans on how AI content creation spread like wildfire, let's tackle the misbeliefs that swirl around it. Spoiler alert: some of these "misbeliefs" are closer to the truth than you might think, but that doesn’t mean they’re without solutions.

I gathered all these pieces of evidence by hand, so it definitely won’t be boring!

Misbelief 1: AI-Generated Content Lacks Consistency and Quality

You’ve probably heard this one a million times: “AI can’t keep it together for more than a few paragraphs before everything goes haywire.” And you know what? That’s not entirely wrong.

As Bani Kaur bluntly puts it, "Almost every prompt breaks down after 3 paragraphs." Why does this happen? Well, AI models, for all their techy brilliance, aren’t exactly built to sustain thematic consistency over long texts the way a seasoned writer does. They lose the plot (literally) after a while, leading to disjointed and sometimes downright baffling content.

How to fix it: Don’t treat AI as your personal Shakespeare. Think of it as your first-draft generator, not the final masterpiece. Start with AI to get the bones of your content, then polish it through good old-fashioned human editing. This way, you can maintain the high quality and consistent narrative flow that keep your readers hooked from start to finish.

Misbelief 2: AI Can’t Generate Truly Original or Insightful Content

If I had a dollar for every time someone said AI is as original as a knockoff handbag, I’d be rolling in it. AI-generated content often gets slammed for being bland, unoriginal, and devoid of any real depth. And they’re not entirely wrong—AI regurgitates patterns it’s been fed, so coming up with a truly fresh idea? That’s a tall order. As Bani Kaur said, AI “can’t have original ideas or contrarian takes.”

How to fix it: Here’s the kicker. AI isn’t supposed to be a creative genius. Instead, use AI to do the heavy lifting when it comes to gathering and organizing data. Then, let your human brain do what it does best: infuse the content with creativity, unique perspectives, and that special sauce only you can bring. The result? A hybrid masterpiece that’s both insightful and original.

Misbelief 3: AI Content Lacks Authenticity and the Human Touch

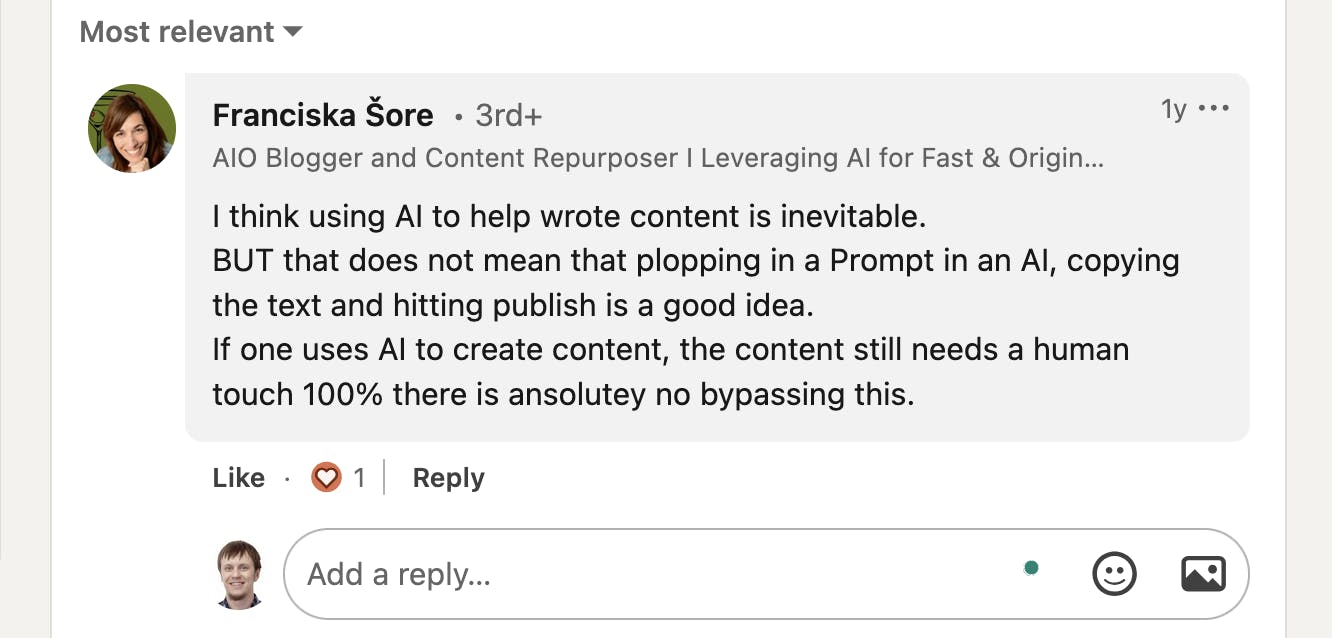

Ah, the old “AI is a robot and robots can’t feel” argument. It’s true—AI-generated content often feels as warm as a refrigerator, lacking the emotional depth and relatability that comes from genuine human experience. As Franciska Šore keenly observed, “If one uses AI to create content, the content still needs a human touch 100%—there is absolutely no bypassing this.”

How to fix it: The solution here is pretty straightforward: you’re the human here, so the touch is on you. Mix AI’s efficiency with your personal anecdotes, real-world examples, and a tone that resonates with your audience. That’s how you’ll turn cold, clinical AI output into something that feels authentic and relatable. No need to spend hours trying to make cold content hot; more or less warm is usually enough.

Misbelief 4: AI Cannot Capture an Authentic Voice or Style

This one hits home for a lot of writers and brand managers. AI-generated content often sounds like it’s trying on someone else’s clothes—it just doesn’t fit right. Matt Gray nailed it when he said, “AI is great, but it's obvious too. A little human touch goes a long way.”

How to fix it: After you’ve let the AI do its thing, take the reins. Adjust the tone, tweak the language, and refine the structure to make sure the content speaks in the authentic voice of your brand or personal style. Remember, AI is a tool, not a replacement for the unique flair you bring to the table.

Misbelief 5: AI Often Produces Biased or Inaccurate Content

This one’s a doozy. AI, for all its smarts, sometimes gets it wrong—like, really wrong. It’s not uncommon for AI-generated content to include biased or misleading information, thanks to the flawed data it’s trained on. As Tom Hannemann highlighted, “ChatGPT generates responses based on patterns in the data it was trained on... it can inadvertently generate false or misleading content.”

How to fix it: Always fact-check. It’s as simple as that. Use a combination of AI tools to cross-verify the content, and then bring in human reviewers to ensure everything checks out. This multi-layered approach reduces the risk of spreading misinformation and keeps your content accurate and trustworthy.

I’ll come back to this in one of the later sections.

Misbelief 6: AI Will Replace Human Writers

If I had a nickel for every time someone said, “AI is coming for our jobs,” I’d have, well, a lot of nickels. There’s a real concern that as AI becomes more advanced, it could devalue the work of human writers and make it tougher to distinguish between machine and human-made content. Kiri Nowak-Smith remarked, “Having a machine write complete articles for us is never going to be a good thing.”

How to fix it: If you ask my opinion on this, I’ll answer, “Not today!” Let’s get one thing straight: AI is a tool, not a replacement. Writers who know how to leverage AI while maintaining their unique voice and creativity will always be in demand. Embrace AI as a partner that enhances your work rather than fearing it as competition. Remember, there’s no substitute for the human touch.

By the way, I believe one of the next versions of AI will be overwhelmingly better than the current ones, so it will replace not only writers but everyone doing intellectual work. Check and mate!

Misbelief 7: AI Content Overproduction Contributes to Information Overload

Here’s the downside of AI’s efficiency—it can churn out so much content so quickly that it floods the internet with material, a lot of which is mediocre at best. Usman Hasan put it bluntly: “Producing the crap has become effortless, meaning more of it to sift through, making it harder to find anything of value.”

How to fix it: Quality over quantity, always. Use AI to streamline your process, but never at the expense of producing high-quality, well-researched content that truly adds value to your audience. Focus on creating content that stands out, rather than just adding to the noise.

And don’t overload yourself with content either. I believe you’re capable of that!

Content Pillar: The Solution to AI’s Flaws

Now that we’ve picked apart some of the common misbeliefs about AI, it’s time to shift gears and dive into the solution that can bridge the gap between AI’s capabilities and the content quality you’re aiming for.

Meet the Content Pillar, my secret weapon for overcoming AI’s limitations and building a content strategy that not only works but thrives. Here, I’ll show how I address some of these issues and share a few valuable tricks you'll definitely like, unless, of course, you’re one of those AI haters. You can check the parent page here.

Proven benefits:

- Aimed at scaled production. The Content Pillar approach is designed for efficient, high-volume content creation without sacrificing quality.

- Far better aligned with the readers than the traditional approach. It ensures that your content directly resonates with your target audience’s needs and interests.

- A synergy of Google juice. By interlinking content, the pillar creates a network that search engines love, boosting your site’s visibility and rankings. Placed on a sufficiently trusted domain, a content pillar can even outrank Wikipedia on the search engine results page.

- A centralized production of valuable insights is much more efficient than separate articles. Instead of scattering efforts across disparate pieces, this approach consolidates resources to produce deeper, more comprehensive insights.

- Planning-friendly. With a clear structure, it’s easier to plan, produce, and maintain a steady content flow.

- Consistent. Every piece of content under the pillar is on-brand, ensuring consistency in tone, quality, and messaging.

- Actionable SEO strategy in 2024. As search engine algorithms evolve, the pillar approach remains a forward-thinking, effective strategy to stay ahead in SEO.

Some Tricks Helping Me Overcome the Barriers

When it comes to using Large Language Models (LLMs) like GPT for content creation, the road isn't always smooth. Many writers face common challenges such as poor quality, lack of originality, and shallow content—issues that can easily arise if these tools aren't used correctly.

But through my experience and a bit of trial and error, I’ve developed a multistage, iterative approach that effectively addresses these challenges.

Trick 1. Avoiding One-Shot Content Creation

One common mistake is relying on LLMs for single-shot content generation. This means inputting a simple prompt and using the first draft as the final piece. Unfortunately, this approach often results in generic, low-quality content that lacks depth and engagement.

Instead of settling for the first draft, I use a multi-stage, iterative process:

- Researching. My first step in content creation is always thorough research. Whether you write on your own or use an LLM, the more you know about the topic, the smoother the ride and the better the result.

- Outline ideation. Shame on me, but just 3 years ago I skipped this very important stage. I believe it’s clear to every reader that it’s a good idea to settle the shape of your article upfront.

- Extra input collection. Before writing, I search for additional references to reinforce my outline.

- Initial drafting. I start by generating an initial draft using an LLM. When put into a strict framework, the model performs far more consistently than without constraints.

- Refinement process. I then iteratively refine the content, layering in additional research, personal insights, and strategic adjustments to enhance quality and relevance.

- Human+machine editing. I use AI tools for simple repetitive tasks, like applying specific formatting or replacing specific words with acceptable substitutes.

This approach mirrors the steps I outlined in my 14-step process, where I advocate for generating content section by section, and then adjusting the text structure and formatting as needed to optimize the output.
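A minimal sketch of the section-by-section flow might look like this. Everything named here is a hypothetical placeholder rather than the actual tooling behind the process: the `OutlineSection` type, the prompt template standing in for the "strict framework", and the `fake_llm` stub, which you would swap for a call to whichever model you use.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class OutlineSection:
    """One agreed-upon section of the outline (hypothetical structure)."""
    heading: str
    key_points: List[str]
    references: List[str] = field(default_factory=list)


# Assumed "strict framework": the same fixed prompt template for every section.
PROMPT_TEMPLATE = (
    "Write the section '{heading}' of an article.\n"
    "Cover only these points:\n{points}\n"
    "Use only these references:\n{refs}\n"
    "Previous section: {previous}\n"
)


def build_prompt(section: OutlineSection, previous: str) -> str:
    """Fill the template with the outline data for one section."""
    points = "\n".join(f"- {p}" for p in section.key_points)
    refs = "\n".join(f"- {r}" for r in section.references) or "- (none)"
    return PROMPT_TEMPLATE.format(
        heading=section.heading, points=points, refs=refs, previous=previous
    )


def draft_article(outline: List[OutlineSection], llm: Callable[[str], str]) -> List[str]:
    """Generate one draft per section, telling the model which section came before."""
    drafts: List[str] = []
    previous = "(start of article)"
    for section in outline:
        drafts.append(llm(build_prompt(section, previous)))
        previous = section.heading
    return drafts


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call: echoes the first line of the prompt."""
    return "DRAFT: " + prompt.splitlines()[0]
```

Each draft returned by `draft_article` is still only a first pass; the refinement and editing stages happen on top of it.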

Trick 2. Leveraging AI for Research, Not Just Writing

Another common misstep is using LLMs solely for writing, neglecting their potential to assist in the research phase. This can lead to factually weak or superficial content.

Here is how I handle it:

- I use AI to quickly gather and summarize information from various sources, building a rich data pool to inform my content. Drawing on several different sources adds another layer of protection against errors.

- I have a special tool for discussing my ideas and helping develop them. Feel free to use it, too: https://chatgpt.com/g/g-A8czR3zIM-solvermachine

- Another tool is simply for searching the web. Don’t think it’s a trivial task not worth a separate custom GPT: https://chatgpt.com/g/g-KJmkosqpI-proofsupplier

- To ensure accuracy, I apply AI to cross-check facts, combining this with human oversight to produce content that is both creative and reliable.

How I approach backing up the whole pillar cluster with statistics and facts:

- I prefer to find and download 5-7 PDF files of industry research from reputable research companies.

- Another tool is built specifically to analyze these PDFs and list the statements it identifies, which can then be cited like any other sourced fact.
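To make the idea of listing statements from research reports concrete, here is a rough sketch. It is not the tool mentioned above: it assumes the PDF text has already been extracted to a plain string, and it swaps in a simple regular-expression heuristic (a number followed by %, "percent", "million", or "billion") to spot sentences that carry a statistic. The function names and the pattern are illustrative assumptions.

```python
import re
from typing import Dict, List

# Assumed heuristic: a number followed by a percent sign or a magnitude word.
STAT_PATTERN = re.compile(
    r"\d+(?:[.,]\d+)?\s*(?:%|percent|million|billion)", re.IGNORECASE
)


def split_sentences(text: str) -> List[str]:
    """Naive splitter: break after '.', '!' or '?' when whitespace follows."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def extract_stat_statements(text: str, source: str) -> List[Dict[str, str]]:
    """Return every sentence containing a statistic, tagged with its source."""
    return [
        {"source": source, "statement": sentence}
        for sentence in split_sentences(text)
        if STAT_PATTERN.search(sentence)
    ]
```

Keeping the source name next to each statement makes the later fact-check easier, since every figure can be traced back to the report it came from.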

Trick 3. Target Audience Is Prioritized Instead of SEO Keywords

Many content creators mistakenly prioritize SEO keywords over the actual needs and interests of their target audience. This often results in content that is keyword-rich but fails to resonate with readers, leading to poor engagement and high bounce rates.

For example, some marketers in IT mistakenly attribute the keywords containing the word “software” to outsourcing companies. However, this word clearly shows that the users are searching for ready-made software, not for software development services.

Instead of starting by gathering the semantic core, I do the following:

- I usually start with analyzing the target audience. It’s challenging, but only the first time. Once you have some experience in this kind of research, you can easily identify buyer personas just by reviewing your client’s offer.

- Then I look for champions: LinkedIn users who ideally fit the selected personas. My rule of thumb is to gather at least 50 champions. The selection criteria, apart from relevance to the personas, include being active in commenting.

- I have a specific Selenium script that does all the dirty work for me: it visits champions’ profiles, scrolls through their comments to the end, and saves all the comments into a TXT file.

- This is quite a meaningful source of buyer behavior data. I have another one, even more valuable, but I’d prefer to keep it secret.

- Finally, after analyzing these gathered logs, I have a significant amount of data about the future audience of my content.

- When you prioritize your audience’s needs, SEO naturally falls into place. When content is genuinely valuable and relevant, it tends to perform better in search engines, as engagement metrics improve (longer time on page, lower bounce rates). In my case, about 80% of the ideated topics then pass keyword validation.
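As an illustration of what analyzing the gathered comment logs can mean in practice, here is a small sketch that counts recurring two-word phrases. It assumes one comment per string (for example, one per line of the TXT file); the stopword list and function names are made up for this example, and a real analysis would likely go further than raw phrase counts.

```python
import re
from collections import Counter
from typing import Iterable, List, Tuple

# Tiny illustrative stopword list; extend it for real data.
STOPWORDS = {
    "the", "a", "an", "and", "or", "to", "of", "in", "is", "it",
    "for", "on", "we", "our", "i", "this", "that", "with",
}


def tokenize(comment: str) -> List[str]:
    """Lowercase the comment and keep only non-stopword tokens."""
    return [w for w in re.findall(r"[a-z']+", comment.lower()) if w not in STOPWORDS]


def top_bigrams(comments: Iterable[str], n: int = 10) -> List[Tuple[str, int]]:
    """Count two-word phrases across all comments and return the n most common.

    Stopwords are dropped before pairing, so a "phrase" may join words
    that were separated by a stopword in the original comment.
    """
    counts: Counter = Counter()
    for comment in comments:
        words = tokenize(comment)
        counts.update(" ".join(pair) for pair in zip(words, words[1:]))
    return counts.most_common(n)
```

Phrases that keep coming up across many champions are candidates for topics, which can then go through keyword validation.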

Trick 4. Synthesizing Valuable Insights From Scratch

AI-generated content is often criticized for being vague, superficial, and lacking in-depth analysis. This is because AI typically generates content based on existing information without truly synthesizing new insights.

This is one of the most challenging aspects: a lot of my colleagues believe this is something AI simply cannot do. But it can, with my help, of course.

- First of all, this approach works far better across an entire pillar cluster than for a single article.

- My special tool Tipster analyzes target audience (TA) segment descriptions and extracts their pain points.

- Next, it also analyzes the articles within the pillar and extracts the common outputs these articles describe.

- After that, Tipster does the matchmaking: it forms “pain point + fitting output” pairs and suggests simple steps toward solutions.

- I review these suggestions both manually and using specific tools, and refine the process to make it potentially more efficient.

- Finally, everything described above forms a solid reference base for generating decent, valuable insights that no one has yet suspected of being synthesized.
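The matchmaking step can be pictured with a toy example. How Tipster works internally is not described here, so this sketch swaps in a deliberately crude stand-in: it pairs each pain point with the output that shares the most words with it (Jaccard overlap) and drops pairs below a threshold. The function names and the 0.2 threshold are assumptions for illustration only.

```python
import re
from typing import List, Set, Tuple


def tokens(text: str) -> Set[str]:
    """Lowercased set of alphabetic words in the text."""
    return set(re.findall(r"[a-z]+", text.lower()))


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Shared words divided by all distinct words; 0.0 if both sets are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def match_pain_points(
    pain_points: List[str], outputs: List[str], threshold: float = 0.2
) -> List[Tuple[str, str, float]]:
    """Pair each pain point with its best-overlapping output, if it clears the threshold."""
    pairs = []
    for pain in pain_points:
        scored = [(jaccard(tokens(pain), tokens(out)), out) for out in outputs]
        score, best = max(scored, default=(0.0, ""))
        if score >= threshold:
            pairs.append((pain, best, round(score, 2)))
    return pairs
```

Word overlap misses synonyms and paraphrases, so the pairs it produces are only a starting list for the manual review described above.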

Trick 5. Addressing AI Hallucinations

AI hallucinations occur when models generate content that is factually incorrect or entirely fabricated, which can severely undermine the credibility of the content.

Did you know that with grounded inputs, the chance of bias drops below the level you’re worrying about? Studies have shown that grounding AI outputs in verified data sources can reduce the occurrence of "hallucinations" (where the AI generates incorrect or nonsensical information) by up to 30-40%.

Add architectural measures such as a unified data format within a single dialogue, output templates, and other stabilizers of that kind. Finally, combine this set of measures with a weighted assessment of AI outputs, and you’ll be surprised to go a long while without a single hallucination or bias episode.

My combined approach along with preliminary calculations:

- Let’s assume the baseline error rate for a typical LLM without grounding or post-checking is around 20%. This includes factual inaccuracies, biases, and other types of errors.

- Using grounded data, meaning relying on verified, high-quality sources, can reduce the error rate significantly. In this estimate, grounding reduces errors by about 50%, so the error rate drops to 10%.

- Implementing a multilayered fact-checking process, where different AI models verify each other’s outputs, could reduce the remaining errors by another 40%. This brings the error rate down to 6%.

- I ensure all inputs are uniform and sourced from consistent, high-quality data. This uniformity reduces variability in the data, leading to more consistent and reliable AI outputs. By reducing data variability, the error rate decreases by 10-15%, lowering it from 6% to approximately 5.1-5.4%.

- I apply a strict framework for processing inputs, including standardized methods for data retrieval, filtering, and integration. This controlled processing minimizes the risk of errors during data handling, contributing to an additional 5-10% reduction and bringing the error rate down to roughly 4.6-5.1%.

- Finally, having human editors review the content can reduce errors by an additional 80%. Human judgment catches nuanced or complex issues that AI might miss, reducing the error rate to approximately 0.9-1.0%.

Sounds cool, huh? I believe 1% is lower than the chance of human error when processing any documents.
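The arithmetic behind this estimate is easy to reproduce. The sketch below simply multiplies the reductions from the list above, which assumes each measure works independently of the others and removes a fixed share of whatever errors remain; that is a simplification, not a measured result. The `STEPS` table and the function name are made up for this example.

```python
from typing import List, Tuple

# (measure, smallest reduction, largest reduction), using the figures from the list above.
STEPS: List[Tuple[str, float, float]] = [
    ("grounded data", 0.50, 0.50),
    ("cross-model fact-checking", 0.40, 0.40),
    ("uniform inputs", 0.10, 0.15),
    ("strict processing framework", 0.05, 0.10),
    ("human editing", 0.80, 0.80),
]


def compound_error_rate(
    baseline: float, steps: List[Tuple[str, float, float]] = STEPS
) -> Tuple[float, float]:
    """Return (best case, worst case) error rate after applying every measure in turn."""
    best = worst = baseline
    for _name, min_cut, max_cut in steps:
        best *= 1 - max_cut   # largest reduction at every step
        worst *= 1 - min_cut  # smallest reduction at every step
    return best, worst


best, worst = compound_error_rate(0.20)
print(f"{best:.2%} to {worst:.2%}")
```

With a 20% baseline, the result lands at roughly 0.9% to 1.0%. Change the baseline or any single reduction and the final figure moves proportionally, which is a quick way to see how sensitive the estimate is to each assumption.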

Wrapping Up

I hope you like what I’ve described here. AI is just a tool; don’t make it a monster. In my opinion, this tool is rather helpful, and people who dismiss it for no visible reason are simply afraid of it irrationally.

Here is a quick cure for that:

- Open ChatGPT

- Try something from what I’ve described

And I believe your attitude might change quite fast. Good luck!
