2024-9-9 00:00:15 Author: hackernoon.com

So, you’ve been playing around with Large Language Models and are beginning to integrate Generative AI into your apps? That’s awesome! But let’s be real. LLMs don’t always behave the way we want them to. They are like evil toddlers with minds of their own!

You soon realize that simple prompt chains are just not enough. Sometimes, we need something more. Sometimes, we need multi-agent workflows! That’s where AutoGen comes in.

Let’s take an example. Imagine you are making a note-taking app (Clearly, the world doesn’t have enough of them. 😝). But hey, we want to do something special. We want to take the simple, raw note that a user gives us and turn it into a fully restructured document, complete with a summary, an optional title, and an automated to-do list of tasks. And we want to do all of this without breaking a sweat - well, for your AI agents at least.

Okay, now, I know what you are thinking - “Aren’t these like rookie programs?” To that, I say, you are right. Mean… but right! But don’t be fooled by the simplicity of the workflow. The skills you’ll learn here - like handling AI agents, implementing workflow control, and managing conversation history - will help you take your AI game to the next level.

So buckle up, because we are going to learn how to make AI workflows using AutoGen!

Before we start, note that you can find a link to all the source code on GitHub.

Building Custom Workflows

Let’s start with the first use case: “Generating a summary for the note followed by a conditional title”. To be fair, we don’t really need to use agents here. But hey, we’ve got to start somewhere, right?

Step 1: Creating Our Base LLM Config

Agentic frameworks like AutoGen always require us to configure the model parameters. We’re talking about the model and fallback model to use, the temperature, and even settings like timeout and caching. In the case of AutoGen, that setting looks something like this:

```python
import os

# Build the base LLM configuration shared by all our agents
base_llm_config = {
    "config_list": [
        {
            "model": "Llama-3-8B-Instruct",
            "api_key": os.getenv("OPENAI_API_KEY"),
            "base_url": os.getenv("OPENAI_API_URL"),
        }
    ],
    "temperature": 0.0,
    "cache_seed": None,
    "timeout": 600,
}
```

As you can see, I’m a huge open-source AI fanboy and swear by Llama 3. You can make AutoGen point to any OpenAI-compatible inference server by simply configuring the api_key and base_url values. So, feel free to use Groq, Together.ai, or even vLLM to host your model locally. I’m using Inferix.

It’s really that easy!
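If you want the fallback model mentioned earlier, `config_list` can hold more than one entry; my understanding is that AutoGen's OpenAI client tries the entries in order when a request fails. Below is a minimal sketch of a helper that builds such a config from the same two environment variables. `build_llm_config` is a hypothetical helper, not part of AutoGen, and `"my-fallback-model"` is a placeholder, not a real model name.

```python
import os
from typing import Dict, List, Optional


def build_llm_config(
    models: List[str],
    temperature: float = 0.0,
    timeout: int = 600,
    cache_seed: Optional[int] = None,
) -> Dict:
    """Build an AutoGen-style llm_config with one config_list entry per model.

    Hypothetical helper: every entry points at the same OpenAI-compatible
    endpoint, read from OPENAI_API_KEY and OPENAI_API_URL.
    """
    if not models:
        raise ValueError("at least one model is required")
    api_key = os.getenv("OPENAI_API_KEY")
    base_url = os.getenv("OPENAI_API_URL")
    return {
        # Entries are listed in priority order: primary model first, fallbacks after
        "config_list": [
            {"model": model, "api_key": api_key, "base_url": base_url}
            for model in models
        ],
        "temperature": temperature,
        "cache_seed": cache_seed,
        "timeout": timeout,
    }


# "my-fallback-model" is a placeholder; substitute a model your server actually hosts
fallback_llm_config = build_llm_config(["Llama-3-8B-Instruct", "my-fallback-model"])
print([entry["model"] for entry in fallback_llm_config["config_list"]])
```

Keeping the endpoint settings in one helper means switching from one OpenAI-compatible host to another stays a two-variable change.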

I’m curious! Would you be interested in a similar guide for open-source AI hosting? Let me know in the comments.

Step 2: Creating Our Agents

Initializing conversational agents in AutoGen is pretty straightforward; simply supply the base LLM config along with a system message and you are good to go.

```python
import autogen


def get_note_summarizer(base_llm_config: dict):
    # A system message to define the role and job of our agent
    system_message = """You are a helpful AI assistant.
The user will provide you a note. Generate a summary describing what the note is about. The summary must follow the provided "RULES".
"RULES":
- The summary should be no more than 3 short sentences.
- Don't use bullet points.
- The summary should be short and concise.
- Identify and retain any "catchy" or memorable phrases from the original text.
- Identify and correct all grammatical errors.
- Output the summary and nothing else."""

    # Create and return our assistant agent
    return autogen.AssistantAgent(
        name="Note_Summarizer",  # Let's give our agent a nice name
        llm_config=base_llm_config,  # This is where we pass the LLM configuration
        system_message=system_message,
    )


def get_title_generator(base_llm_config: dict):
    # A system message to define the role and job of our agent
    system_message = """You are a helpful AI assistant.
The user will provide you a note along with a summary. Generate a title based on the user's input.
The title must be witty and easy to read. The title should accurately present what the note is about. The title must strictly be less than 10 words. Make sure you keep the title short.
Make sure you print the title and nothing else.
"""

    # Create and return our assistant agent
    return autogen.AssistantAgent(
        name="Title_Generator",
        llm_config=base_llm_config,
        system_message=system_message,
    )
```

The most important part of creating agents is the system_message. Take a moment to look at the system_message I have used to configure my agents.

It’s important to remember that the way AI Agents in AutoGen work is by participating in a conversation. The way they interpret and carry the conversation forward completely depends on the system_message they are configured with. This is one of the places where you will be spending some time to get things right.
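Conceptually, whenever an agent is asked to reply, its system_message is placed in front of the conversation so far and the whole list is sent to the model as an OpenAI-style chat request. The sketch below is a simplified, library-free illustration of that idea. `build_request_messages` is a hypothetical helper, not AutoGen's API, and AutoGen's real bookkeeping (function calls, tool messages and so on) is more involved.

```python
from typing import Dict, List, Tuple


def build_request_messages(
    agent_name: str,
    system_message: str,
    history: List[Tuple[str, str]],
) -> List[Dict[str, str]]:
    """Prepend the agent's system message to the shared (speaker, content) history.

    Messages the agent wrote itself become "assistant" turns; everything else
    is presented to the model as a "user" turn tagged with the speaker's name.
    """
    request = [{"role": "system", "content": system_message}]
    for speaker, content in history:
        role = "assistant" if speaker == agent_name else "user"
        request.append({"role": role, "name": speaker, "content": content})
    return request


history = [("Admin", "Note: discuss titles and thumbnails")]
for msg in build_request_messages("Note_Summarizer", "You are a helpful AI assistant.", history):
    print(msg["role"], "|", msg["content"])
```

Because the system message is the only part of the request that differs between agents reading the same history, it is the main lever you have over each agent's behaviour.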

We need just one more agent. An agent to act as a proxy for us humans. An agent which can initiate the conversation with the “note” as its initial prompt.

```python
def get_user():
    # A system message to define the role and job of our agent
    system_message = "A human admin. Supplies the initial prompt and nothing else."

    # Create and return our user agent
    return autogen.UserProxyAgent(
        name="Admin",
        system_message=system_message,
        human_input_mode="NEVER",  # We don't want interrupts for human-in-loop scenarios
        code_execution_config=False,  # We definitely don't want AI executing code.
        default_auto_reply=None,
    )
```

There is nothing fancy going on here. Just note that I’ve set the default_auto_reply parameter to None. That's important: it makes sure the conversation ends whenever the user agent is sent a message.
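To see why a None reply ends things, here is a toy, library-free model of an exchange between two parties: when a party produces no reply, no new message is sent, so nobody is prompted again and the loop stops. This illustrates the idea only and is not AutoGen's internal code; `run_two_party_chat`, `summarize` and `silent_user` are hypothetical names.

```python
from typing import Callable, List, Optional

# A replier receives the latest message and returns a reply, or None to stay silent
Replier = Callable[[str], Optional[str]]


def run_two_party_chat(opening: str, assistant: Replier, user: Replier, max_turns: int = 10) -> List[str]:
    """Alternate assistant and user replies until one of them returns None."""
    transcript = [opening]
    repliers = [assistant, user]
    for turn in range(max_turns):
        reply = repliers[turn % 2](transcript[-1])
        if reply is None:
            # No reply means no new message, so nobody is prompted again and the chat ends
            break
        transcript.append(reply)
    return transcript


def summarize(note: str) -> str:
    return f"Summary of: {note}"


def silent_user(_message: str) -> Optional[str]:
    # Plays the role of a user proxy configured with default_auto_reply=None
    return None


print(run_two_party_chat("buy milk", summarize, silent_user))
```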

Oops, I totally forgot to create those agents. Let’s do that real quick.

```python
# Create our agents
user = get_user()
note_summarizer = get_note_summarizer(base_llm_config)
title_generator = get_title_generator(base_llm_config)
```
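get_note_summarizer and get_title_generator differ only in their name and system message, so one optional refactor is a small factory that builds agents from a name-to-prompt mapping. This is a sketch of that design choice, not the author's code: `make_assistant`, `build_agents` and `FakeAgent` are hypothetical, the prompts below are abbreviated placeholders, and in real use you would pass `autogen.AssistantAgent` as `agent_cls`.

```python
from typing import Any, Callable, Dict


def make_assistant(agent_cls: Callable[..., Any], name: str, system_message: str, llm_config: Dict) -> Any:
    """Create one agent; pass autogen.AssistantAgent as agent_cls in real use."""
    return agent_cls(name=name, llm_config=llm_config, system_message=system_message)


def build_agents(agent_cls: Callable[..., Any], llm_config: Dict, system_messages: Dict[str, str]) -> Dict[str, Any]:
    """Create one agent per (name, system message) pair, all sharing the same LLM config."""
    return {
        name: make_assistant(agent_cls, name, message, llm_config)
        for name, message in system_messages.items()
    }


class FakeAgent:
    """Test double standing in for autogen.AssistantAgent; it only stores its arguments."""

    def __init__(self, name: str, llm_config: Dict, system_message: str):
        self.name = name
        self.llm_config = llm_config
        self.system_message = system_message


agents = build_agents(
    FakeAgent,
    {"temperature": 0.0},
    {
        "Note_Summarizer": "You are a helpful AI assistant. Summarize the note.",
        "Title_Generator": "You are a helpful AI assistant. Generate a title.",
    },
)
print(sorted(agents))
```

Taking the agent class as a parameter keeps the factory testable without AutoGen installed and makes adding a new agent a one-line change to the mapping.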

Step 3: Set Up Agent Coordination Using a GroupChat

The final piece of the puzzle is making our agents coordinate. We need to determine the sequence of their participation and decide which agents should take part at all.

Okay, that’s more than one piece. But you get the point! 🙈

One possible solution would be to let AI figure out the sequence in which the Agents participate. This is not a bad idea. In fact, this is my go-to option when dealing with complex problems where the nature of the workflow is dynamic.

However, this approach has its drawbacks. Reality strikes again! The agent responsible for making these decisions often needs a large model, resulting in higher latencies and costs. Additionally, there’s a risk that it might make incorrect decisions.

For deterministic workflows, where we know the sequence of steps ahead of time, I like to grab the reins and steer the ship myself. Luckily, AutoGen supports this use case with a handy feature called GroupChat.

```python
from autogen import GroupChatManager
from autogen.agentchat.groupchat import GroupChat
from autogen.agentchat.agent import Agent


def get_group_chat(agents, generate_title: bool = False):
    # Define the function which decides the agent selection order
    def speaker_selection_method(last_speaker: Agent, group_chat: GroupChat):
        # The admin will always forward the note to the summarizer
        if last_speaker.name == "Admin":
            return group_chat.agent_by_name("Note_Summarizer")

        # Forward the note to the title generator if the user wants a title
        if last_speaker.name == "Note_Summarizer" and generate_title:
            return group_chat.agent_by_name("Title_Generator")

        # Handle the default case - exit
        return None

    return GroupChat(
        agents=agents,
        messages=[],
        max_round=3,  # There will only be 3 turns in this group chat. The group chat will exit automatically after that.
        speaker_selection_method=speaker_selection_method,
    )
```
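To check the routing without calling a model, you can replay the same selection rules against stub agents. This library-free sketch mirrors speaker_selection_method; `StubAgent`, `make_selector` and `trace_speakers` are hypothetical names, and it counts the Admin's opening message as the first of the `max_round` turns, which is how I read AutoGen's group chat loop.

```python
from typing import Callable, List, Optional


class StubAgent:
    """Minimal stand-in exposing only the .name attribute the selector reads."""

    def __init__(self, name: str):
        self.name = name


def make_selector(generate_title: bool) -> Callable[[StubAgent], Optional[str]]:
    # Same routing rules as speaker_selection_method, returning names instead of agents
    def select(last_speaker: StubAgent) -> Optional[str]:
        if last_speaker.name == "Admin":
            return "Note_Summarizer"
        if last_speaker.name == "Note_Summarizer" and generate_title:
            return "Title_Generator"
        return None  # None ends the chat

    return select


def trace_speakers(select: Callable[[StubAgent], Optional[str]], first: str = "Admin", max_round: int = 3) -> List[str]:
    """Record who speaks, stopping when the selector returns None or max_round is reached."""
    order = [first]
    while len(order) < max_round:
        next_name = select(StubAgent(order[-1]))
        if next_name is None:
            break
        order.append(next_name)
    return order


print(trace_speakers(make_selector(generate_title=True)))
print(trace_speakers(make_selector(generate_title=False)))
```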

Imagine a GroupChat as a WhatsApp group where all the agents can chat and collaborate. This setup lets agents build on each other’s work. The GroupChat class, along with a companion class called GroupChatManager, acts like the group admin, keeping track of all the messages each agent sends so that everyone stays in the loop with the conversation history.

In the above code snippet, we have created a GroupChat with a custom speaker_selection_method. The speaker_selection_method allows us to specify our custom workflow. Here’s a visual representation of the same.

Since the speaker_selection_method is essentially a Python function, we can do whatever we want with it! This helps us create some really powerful workflows. For example, we could:

- Pair up agents to verify each other’s work.

- Involve a “Troubleshooter” agent if any of the previous agents output an error.

- Trigger webhooks to external systems to inform them of the progress made.

Imagine the possibilities! 😜
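As an example of the second idea, the routing rule could inspect the latest message and detour to a Troubleshooter agent. The sketch uses plain strings and message dicts so it runs on its own; the Troubleshooter agent and the naive substring check for "error" are assumptions, not something from the article. Inside AutoGen you would read `group_chat.messages` and return `group_chat.agent_by_name(...)` instead of a name.

```python
from typing import Dict, List, Optional

# The happy path: who normally speaks after whom
PIPELINE = {"Admin": "Note_Summarizer", "Note_Summarizer": "Title_Generator"}


def select_with_troubleshooter(last_speaker: str, messages: List[Dict[str, str]]) -> Optional[str]:
    """Hypothetical routing: send any output mentioning "error" to a Troubleshooter."""
    last_content = messages[-1]["content"] if messages else ""
    if last_speaker != "Troubleshooter" and "error" in last_content.lower():
        return "Troubleshooter"
    # Anyone not in the pipeline (including the Troubleshooter) ends the chat
    return PIPELINE.get(last_speaker)


print(select_with_troubleshooter("Note_Summarizer", [{"content": "Error: model timed out"}]))
```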

Step 4: Setting It All Up and Initiating the Conversation

The last step is creating an instance of the GroupChat, wrapping it up inside a GroupChatManager and initiating the conversation.

```python
# Create our group chat
groupchat = get_group_chat([user, note_summarizer, title_generator], generate_title=True)
manager = autogen.GroupChatManager(groupchat=groupchat, llm_config=base_llm_config)

# Start the chat (`note` holds the raw text of the user's note)
user.initiate_chat(
    manager,
    clear_history=True,
    message=note,
)
```

Note: The user is chatting with the GroupChatManager, not the individual agents. It has no clue which agents will join the conversation to provide the final reply. Sneaky, right?

The output will look something like this:

```text
Admin (to chat_manager):

Note:
Convo with editor:
- discuss titles and thumbnails
- discuss video editing tips tracker
- Zeeshan presents the tracker
- what trick helps with what
- he decidedls if we can experiment with something new
- make sure all all videos since how config management works in k8s are backed u
- make list of YouTube thumbnail templates
- make list of YouTube idea generation limits

--------------------------------------------------------------------------------

Next speaker: Note_Summarizer

Note_Summarizer (to chat_manager):

The note is about a conversation with an editor regarding video production. They discussed titles and thumbnails, as well as a video editing tips tracker presented by Zeeshan, which highlights tricks for specific tasks. Additionally, they ensured that all videos on Kubernetes configuration management are backed up and created lists of YouTube thumbnail templates and idea generation limits.

--------------------------------------------------------------------------------

Next speaker: Title_Generator

Title_Generator (to chat_manager):

"Video Production Chat: Titles, Thumbnails, and Editing Tips"

--------------------------------------------------------------------------------
```

Taking Control Of The Conversation

Next up, we’ll dive into the final use case: taking a given “note,” restructuring it for better clarity, and then creating a task list for the user.

Here’s how we are going to go about it:

We will start by identifying a list of topics covered in the note. This list is the driving force behind the entire process. It establishes the sections for the reformatted note and determines the level of detail for our generated tasks.

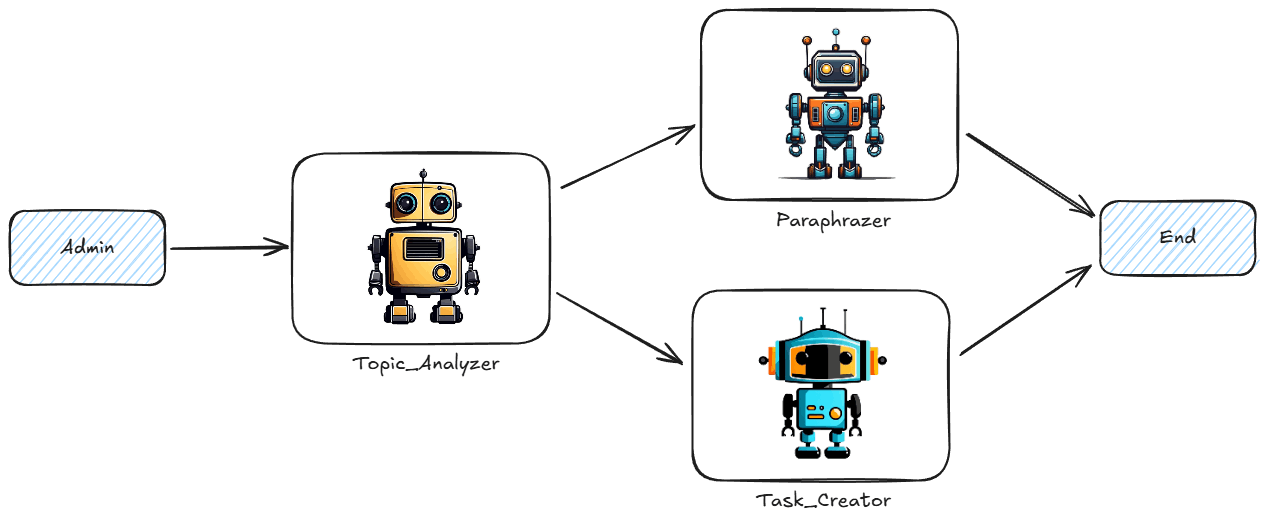
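The Topic_Analyzer answers in free text (in the run shown later it returns a numbered list), so if you ever need the topics in code, for example to check that each topic became a section of the restructured note, you have to parse them. A small regex-based sketch; `parse_topics` and `section_headers` are hypothetical helpers, and the accepted list formats (`1.`, `1)`, `-`, `*`) are assumptions about how the model tends to reply.

```python
import re
from typing import List

# Matches list lines such as "1. Titles", "2) Thumbnails" or "- Titles"
_TOPIC_LINE = re.compile(r"^\s*(?:\d+[.)]|[-*])\s+(.+?)\s*$")


def parse_topics(text: str) -> List[str]:
    """Pull topic names out of a numbered or bulleted list, ignoring other lines."""
    topics = []
    for line in text.splitlines():
        match = _TOPIC_LINE.match(line)
        if match:
            topics.append(match.group(1))
    return topics


def section_headers(topics: List[str]) -> List[str]:
    # Each topic becomes a "##" header in the restructured note
    return [f"## {topic}" for topic in topics]


sample_reply = """Here is the list of topics discussed in the note:
1. Titles
2. Thumbnails
3. Video editing tips"""
print(section_headers(parse_topics(sample_reply)))
```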

There is just one small problem. The Paraphrazer and Task_Creator agents don't really care about each other’s output. They only care about the output of the Topic_Analyzer.

So, we need a way to keep these agents’ responses from cluttering up the conversation history, or it’ll be complete chaos. We’ve already taken control of the workflow; now, it’s time to be the boss of the conversation history as well! 😎
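The idea is easy to model outside AutoGen: keep one shared history and drop messages from the two "leaf" agents before they are stored. A library-free sketch; `append_filtered` and `HIDDEN_SPEAKERS` are hypothetical names, and the AutoGen version of this filter (CustomGroupChat.append in Step 2) works on AutoGen's own message dicts.

```python
from typing import Dict, List

# Speakers whose replies should never reach the shared history
HIDDEN_SPEAKERS = {"Paraphrazer", "Task_Creator"}


def append_filtered(history: List[Dict[str, str]], name: str, content: str) -> None:
    """Append to the shared history unless the speaker is one we want to hide."""
    if name not in HIDDEN_SPEAKERS:
        history.append({"name": name, "content": content})


history: List[Dict[str, str]] = []
for speaker in ["Admin", "Topic_Analyzer", "Paraphrazer", "Task_Creator"]:
    # Show what each agent would see at the moment it is asked to speak
    print(f"{speaker} sees: {[m['name'] for m in history]}")
    append_filtered(history, speaker, f"<{speaker} output>")
```

Running it shows that the Task_Creator sees only the Admin's note and the Topic_Analyzer's list, never the Paraphrazer's rewrite.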

Step 1: Creating the Agents

First things first, we need to set up our agents. I’m not going to bore you with the details so here’s the code:

```python
def get_topic_analyzer(base_llm_config: dict):
    # A system message to define the role and job of our agent
    system_message = """You are a helpful AI assistant.
The user will provide you a note. Generate a list of topics discussed in that note. The output must obey the following "RULES":
"RULES":
- Output should only contain the important topics from the note.
- There must be at least one topic in output.
- Don't reuse the same text from user's note.
- Don't have more than 10 topics in output."""

    # Create and return our assistant agent
    return autogen.AssistantAgent(
        name="Topic_Analyzer",
        llm_config=base_llm_config,
        system_message=system_message,
    )


def get_paraphrazer(base_llm_config: dict):
    # A system message to define the role and job of our agent
    system_message = """You are a helpful AI content editor.
The user will provide you a note along with a summary.
Rewrite that note and make sure you cover everything in the note. Do not include the title. The output must obey the following "RULES":
"RULES":
- Output must be in markdown.
- Make sure you use each point provided in the summary as a header.
- Each header must start with `##`.
- Headers are not bullet points.
- Each header can optionally have a list of bullet points. Don't put bullet points if the header has no content.
- Strictly use "-" to start bullet points.
- Optionally make an additional header named "Additional Info" to cover points not included in the summary. Use the "Additional Info" header for unclassified points.
- Identify and correct spelling & grammatical mistakes."""

    # Create and return our assistant agent
    return autogen.AssistantAgent(
        name="Paraphrazer",
        llm_config=base_llm_config,
        system_message=system_message,
    )


def get_tasks_creator(base_llm_config: dict):
    # A system message to define the role and job of our agent
    system_message = """You are a helpful AI personal assistant. The user will provide you a note along with a summary.
Identify each task the user has to do as next steps. Make sure to cover all the action items mentioned in the note.
The output must obey the following "RULES":
"RULES":
- Output must be a YAML object with a field named tasks.
- Make sure each task object contains fields title and description.
- Extract the title based on the tasks the user has to do as next steps.
- Description will be in markdown format. Feel free to include additional formatting and numbered lists.
- Strictly use "-" or "dashes" to start bullet points in the description field.
- Output an empty tasks array if no tasks were found.
- Identify and correct spelling & grammatical mistakes.
- Identify and fix any errors in the YAML object.
- Output should strictly be in YAML with no ``` or any additional text."""

    # Create and return our assistant agent
    return autogen.AssistantAgent(
        name="Task_Creator",
        llm_config=base_llm_config,
        system_message=system_message,
    )
```
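Rules in a system message are requests, not guarantees, so it can be worth checking the Task_Creator's output after parsing it. A hedged sketch of such a check, operating on the Python object that `yaml.safe_load` returns; `task_problems` is a hypothetical helper, and what to do on failure (retry, ask the agent to fix it, or drop the tasks) is left to you.

```python
from typing import Any, List


def task_problems(parsed: Any) -> List[str]:
    """Check a parsed Task_Creator reply against the RULES in its system message.

    `parsed` is whatever yaml.safe_load returned; an empty list means it looks valid.
    """
    if not isinstance(parsed, dict) or "tasks" not in parsed:
        return ["top level must be a mapping with a 'tasks' field"]
    tasks = parsed["tasks"]
    if tasks is None:
        # "tasks:" with nothing after it parses to None; treat it as an empty list
        tasks = []
    if not isinstance(tasks, list):
        return ["'tasks' must be a list"]
    problems = []
    for index, task in enumerate(tasks):
        if not isinstance(task, dict):
            problems.append(f"task {index} is not a mapping")
            continue
        for field in ("title", "description"):
            value = task.get(field)
            if not isinstance(value, str) or not value.strip():
                problems.append(f"task {index} is missing a non-empty '{field}'")
    return problems


print(task_problems({"tasks": [{"title": "Back up videos"}]}))
```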

Step 2: Create a Custom GroupChat

Unfortunately, AutoGen does not let us control the conversation history directly. So, we need to extend the GroupChat class with our own implementation.

```python
from typing import Dict

from autogen import AssistantAgent
from autogen.agentchat.agent import Agent
from autogen.agentchat.groupchat import GroupChat


class CustomGroupChat(GroupChat):
    def __init__(self, agents):
        super().__init__(agents, messages=[], max_round=4)

    # This function gets invoked whenever we want to append a message to the conversation history.
    def append(self, message: Dict, speaker: Agent):
        # We want to skip messages from the Paraphrazer and the Task_Creator
        if speaker.name != "Paraphrazer" and speaker.name != "Task_Creator":
            super().append(message, speaker)

    # The `speaker_selection_method` now becomes a function we will override from the base class
    def select_speaker(self, last_speaker: Agent, selector: AssistantAgent):
        if last_speaker.name == "Admin":
            return self.agent_by_name("Topic_Analyzer")
        if last_speaker.name == "Topic_Analyzer":
            return self.agent_by_name("Paraphrazer")
        if last_speaker.name == "Paraphrazer":
            return self.agent_by_name("Task_Creator")

        # Return the user agent by default
        return self.agent_by_name("Admin")
```

We override two functions from the base GroupChat class:

- append: This controls what messages are added to the conversation history.
- select_speaker: This is another way to specify the speaker_selection_method.
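Because select_speaker is an ordinary method, the if-chain can also be expressed as a lookup table, which keeps the whole workflow in one place as it grows. A sketch of that alternative; `NEXT_SPEAKER`, `next_speaker_name` and `speaking_order` are hypothetical names. Inside CustomGroupChat.select_speaker, the body would become `return self.agent_by_name(next_speaker_name(last_speaker.name))`.

```python
from typing import Dict, List

# Each speaker maps to whoever should talk next; anyone not listed hands back to Admin
NEXT_SPEAKER: Dict[str, str] = {
    "Admin": "Topic_Analyzer",
    "Topic_Analyzer": "Paraphrazer",
    "Paraphrazer": "Task_Creator",
}


def next_speaker_name(last_speaker_name: str) -> str:
    return NEXT_SPEAKER.get(last_speaker_name, "Admin")


def speaking_order(max_round: int = 4) -> List[str]:
    """Replay the table for max_round turns, starting with Admin's opening message."""
    order = ["Admin"]
    while len(order) < max_round:
        order.append(next_speaker_name(order[-1]))
    return order


print(speaking_order())
```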

But wait, on diving deeper into AutoGen’s code, I realized that the GroupChatManager makes each agent maintain the conversation history as well. Don’t ask me why. I really don’t know!

So, let’s extend the GroupChatManager as well to fix that:

```python
from typing import Dict, List, Optional, Tuple

from autogen import GroupChatManager
from autogen.agentchat.agent import Agent
from autogen.agentchat.groupchat import GroupChat


class CustomGroupChatManager(GroupChatManager):
    def __init__(self, groupchat, llm_config):
        super().__init__(groupchat=groupchat, llm_config=llm_config)

        # Don't forget to register your reply functions
        self.register_reply(Agent, CustomGroupChatManager.run_chat, config=groupchat, reset_config=GroupChat.reset)

    def run_chat(
        self,
        messages: Optional[List[Dict]] = None,
        sender: Optional[Agent] = None,
        config: Optional[GroupChat] = None,
    ) -> Tuple[bool, Optional[str]]:
        """Run a group chat."""
        if messages is None:
            messages = self._oai_messages[sender]
        message = messages[-1]
        speaker = sender
        groupchat = config

        for i in range(groupchat.max_round):
            # set the name to speaker's name if the role is not function
            if message["role"] != "function":
                message["name"] = speaker.name

            groupchat.append(message, speaker)

            if self._is_termination_msg(message):
                # The conversation is over
                break

            # We do not want each agent to maintain its own conversation history
            # broadcast the message to all agents except the speaker
            # for agent in groupchat.agents:
            #     if agent != speaker:
            #         self.send(message, agent, request_reply=False, silent=True)

            # Pro Tip: Feel free to "send" messages to the user agent if you want to access the messages outside of autogen
            for agent in groupchat.agents:
                if agent.name == "Admin":
                    self.send(message, agent, request_reply=False, silent=True)

            if i == groupchat.max_round - 1:
                # the last round
                break

            try:
                # select the next speaker
                speaker = groupchat.select_speaker(speaker, self)
                # let the speaker speak
                # We'll now have to pass their entire conversation of messages on generate_reply
                # Commented OG code: reply = speaker.generate_reply(sender=self)
                reply = speaker.generate_reply(sender=self, messages=groupchat.messages)
            except KeyboardInterrupt:
                # let the admin agent speak if interrupted
                if groupchat.admin_name in groupchat.agent_names:
                    # admin agent is one of the participants
                    speaker = groupchat.agent_by_name(groupchat.admin_name)
                    # We'll now have to pass their entire conversation of messages on generate_reply
                    # Commented OG code: reply = speaker.generate_reply(sender=self)
                    reply = speaker.generate_reply(sender=self, messages=groupchat.messages)
                else:
                    # admin agent is not found in the participants
                    raise

            if reply is None:
                break

            # The speaker sends the message without requesting a reply
            speaker.send(reply, self, request_reply=False)
            message = self.last_message(speaker)

        return True, None
```

I’ve made a few minor edits to AutoGen’s original run_chat implementation; the comments explain each one.
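To make the effect of the commented-out broadcast concrete, this toy model counts which messages end up in each agent's private history under the stock behaviour versus the customised loop. It is a simplified illustration, not AutoGen code; `deliver` is a hypothetical helper and it only tracks the copies the manager sends.

```python
from typing import Dict, List


def deliver(messages: List[str], speakers: List[str], agents: List[str], broadcast: bool) -> Dict[str, List[str]]:
    """Collect the copies of each message the manager sends to each agent.

    broadcast=True mimics the stock loop (every message goes to every agent
    except its speaker); broadcast=False mimics the customised loop, where
    only "Admin" receives copies, including of its own message.
    """
    inbox: Dict[str, List[str]] = {agent: [] for agent in agents}
    for message, speaker in zip(messages, speakers):
        for agent in agents:
            if broadcast and agent != speaker:
                inbox[agent].append(message)
            elif not broadcast and agent == "Admin":
                inbox[agent].append(message)
    return inbox


AGENTS = ["Admin", "Topic_Analyzer", "Paraphrazer", "Task_Creator"]
MESSAGES = ["note", "topics", "rewrite", "tasks"]
for mode in (True, False):
    inbox = deliver(MESSAGES, AGENTS, AGENTS, broadcast=mode)
    print("broadcast" if mode else "admin-only", {agent: len(msgs) for agent, msgs in inbox.items()})
```

With broadcasting, the Task_Creator's private history would still contain the Paraphrazer's rewrite, which is exactly what the filtered GroupChat history was meant to avoid.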

But there is one thing I really want to emphasize: you can override the GroupChatManager’s run_chat method to plug in your own workflow engine, like Apache Airflow or Temporal. Practitioners of distributed systems know exactly how powerful this capability is!
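One way to picture this: if run_chat hands each agent turn to an executor instead of calling generate_reply directly, that executor can later be swapped for a durable engine. The sketch shows only that seam, a `StepRunner` protocol with an in-process implementation that retries. `StepRunner`, `LocalRunner` and `run_pipeline` are hypothetical; an Airflow or Temporal backend would implement `run` with that engine's own client APIs, which are not shown here.

```python
from typing import Callable, List, Optional, Protocol, Tuple


class StepRunner(Protocol):
    """Executes one agent turn; a workflow engine adapter could implement this."""

    def run(self, step_name: str, step: Callable[[], str]) -> str: ...


class LocalRunner:
    """In-process runner that retries a step when it raises RuntimeError."""

    def __init__(self, max_attempts: int = 3):
        self.max_attempts = max_attempts
        self.log: List[Tuple[str, int]] = []  # (step name, attempt number)

    def run(self, step_name: str, step: Callable[[], str]) -> str:
        last_error: Optional[Exception] = None
        for attempt in range(1, self.max_attempts + 1):
            self.log.append((step_name, attempt))
            try:
                return step()
            except RuntimeError as error:  # treated as retryable in this sketch
                last_error = error
        raise RuntimeError(f"{step_name} failed after {self.max_attempts} attempts") from last_error


def run_pipeline(runner: StepRunner, steps: List[Tuple[str, Callable[[], str]]]) -> List[str]:
    """Run agent turns in order, leaving execution and retries to the runner."""
    return [runner.run(name, step) for name, step in steps]


attempts = {"count": 0}


def flaky_summarizer() -> str:
    # Fails on the first call to simulate a transient model timeout
    attempts["count"] += 1
    if attempts["count"] == 1:
        raise RuntimeError("temporary model timeout")
    return "summary"


runner = LocalRunner()
print(run_pipeline(runner, [("Note_Summarizer", flaky_summarizer), ("Title_Generator", lambda: "title")]))
print(runner.log)
```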

Step 3: Setting It All Up and Initiating the Conversation

We set this all up like the previous example and watch this baby purrr! 🐱

```python
import yaml

# Create our agents
user = get_user()
topic_analyzer = get_topic_analyzer(base_llm_config)
paraphrazer = get_paraphrazer(base_llm_config)
task_creator = get_tasks_creator(base_llm_config)

# Create our group chat
groupchat = CustomGroupChat(agents=[user, topic_analyzer, paraphrazer, task_creator])
manager = CustomGroupChatManager(groupchat=groupchat, llm_config=base_llm_config)

# Start the chat
user.initiate_chat(
    manager,
    clear_history=True,
    message=note,
)

# Let's print the count of tasks just for fun
chat_messages = user.chat_messages.get(manager)
if chat_messages is not None:
    for message in chat_messages:
        if message.get("name") == "Task_Creator":
            task_list = yaml.safe_load(message.get("content"))  # type: ignore
            num_tasks = len(task_list.get("tasks") or [])
            print(f"Got {num_tasks} tasks from Task_Creator.")
```

The output will look something like this:

```text
Admin (to chat_manager):

Note:
Convo with editor:
- discuss titles and thumbnails
- discuss video editing tips tracker
- Zeeshan presents the tracker
- what trick helps with what
- he decidedls if we can experiment with something new
- make sure all all videos since how config management works in k8s are backed u
- make list of YouTube thumbnail templates
- make list of YouTube idea generation limits

--------------------------------------------------------------------------------

Topic_Analyzer (to chat_manager):

Here is the list of topics discussed in the note:
1. Titles
2. Thumbnails
3. Video editing tips
4. Config management in Kubernetes (k8s)
5. YouTube thumbnail templates
6. YouTube idea generation limits

--------------------------------------------------------------------------------

Paraphrazer (to chat_manager):

Here is the rewritten note in markdown format:

## Titles
- Discuss titles and thumbnails with the editor

## Video Editing Tips Tracker
### Zeeshan presents the tracker
- What trick helps with what
- He decides if we can experiment with something new

## Config Management in Kubernetes (k8s)
- Make sure all videos since how config management works in k8s are backed up

## YouTube Thumbnail Templates
- Make a list of YouTube thumbnail templates

## YouTube Idea Generation Limits
- Make a list of YouTube idea generation limits

## Additional Info
- Discuss video editing tips tracker with Zeeshan
- Present the tracker and decide if we can experiment with something new

--------------------------------------------------------------------------------

Task_Creator (to chat_manager):

tasks:
  - title: Discuss Titles and Thumbnails
    description: >-
      - Discuss titles and thumbnails with the editor

      This task involves having a conversation with the editor to discuss the titles and thumbnails for the videos.
  - title: Discuss Video Editing Tips Tracker
    description: >-
      - Zeeshan presents the tracker
      - Discuss what trick helps with what
      - Decide if we can experiment with something new

      This task involves discussing the video editing tips tracker presented by Zeeshan, understanding what tricks help with what, and deciding if it's possible to experiment with something new.
  - title: Back up All Videos Since How Config Management Works in k8s
    description: >-
      - Make sure all videos since how config management works in k8s are backed up

      This task involves ensuring that all videos related to config management in Kubernetes (k8s) are backed up.
  - title: Create List of YouTube Thumbnail Templates
    description: >-
      - Make list of YouTube thumbnail templates

      This task involves creating a list of YouTube thumbnail templates.
  - title: Create List of YouTube Idea Generation Limits
    description: >-
      - Make list of YouTube idea generation limits

      This task involves creating a list of YouTube idea generation limits.

--------------------------------------------------------------------------------

Got 5 tasks from Task_Creator.
```

Yup. Welcome to the age of AI giving us hoomans work to do! (Where did it all go wrong? 🤷♂️)
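The Task_Creator prompt asks for bare YAML with no code fences, but models don't always comply, and a fenced reply would most likely make `yaml.safe_load` raise an error. A small defensive sketch: `strip_code_fences` is a hypothetical helper you could call before `yaml.safe_load` in the task-counting loop above, and it only handles a single fence wrapping the whole reply.

```python
import re

FENCE = "`" * 3  # a Markdown code fence (three backticks)

# An optional language tag after the opening fence, then the body, then the closing fence
_FENCED_REPLY = re.compile(
    r"^\s*" + FENCE + r"[A-Za-z]*[ \t]*\n(.*?)\n\s*" + FENCE + r"\s*$",
    re.DOTALL,
)


def strip_code_fences(text: str) -> str:
    """Return the body of a reply wrapped in a single code fence, else the stripped reply."""
    match = _FENCED_REPLY.match(text)
    return match.group(1) if match else text.strip()


wrapped = FENCE + "yaml\ntasks: []\n" + FENCE
print(strip_code_fences(wrapped))
```

In the loop, the parsing line would become `task_list = yaml.safe_load(strip_code_fences(message.get("content")))`.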

Conclusion

Building Generative AI-driven applications is hard. But it can be done with the right tools. To summarize:

- AI agents unlock this amazing capability of modeling complex problems as conversations.

- If done right, it can help make our AI applications more deterministic and reliable.

- Tools like AutoGen provide us with a framework with simple abstractions to build AI Agents.

As the next steps, you can check the following resources to dive deeper into the world of AI agents:
